Multi-Level Gated U-Net for Denoising TMR Sensor-Based MCG Signals

MGU-Net, a gated U-Net, denoises magnetocardiography signals from low-cost TMR sensors so that subtle, clinically relevant waveform features can be recovered.

Background & Academic Lineage

The Origin & Academic Lineage

Magnetocardiography (MCG) is a non-invasive technique for mapping the heart's electrical activity by measuring the magnetic fields it generates. Historically, the "gold standard" for this has been the Superconducting Quantum Interference Device (SQUID). While SQUIDs offer incredible sensitivity, they require liquid helium cooling and cost roughly USD 1 million, which makes them impractical for widespread clinical use. Optically Pumped Magnetometers (OPMs) are a newer alternative but involve complex optical setups and strict magnetic shielding requirements that drive up maintenance costs.

Tunnel Magnetoresistance (TMR) sensors emerged as a cost-effective, room-temperature alternative. However, they suffer from a significant "pain point": they exhibit high levels of $1/f$ electrical noise (0.1–100 Hz) and are highly susceptible to environmental interference. Previous denoising methods, such as digital filters or Empirical Mode Decomposition (EMD), struggle to handle this non-stationary noise while preserving the subtle, low-amplitude features of the cardiac cycle (like P-waves and T-waves). Furthermore, existing deep learning models designed for ECG (electrocardiogram) are often suboptimal for MCG because MCG noise profiles—specifically the $1/f$ noise—differ fundamentally from the baseline drift and muscle artifacts found in ECG data. The authors developed MGU-Net to specifically bridge this gap by leveraging the periodic nature of cardiac signals to suppress irregular noise.

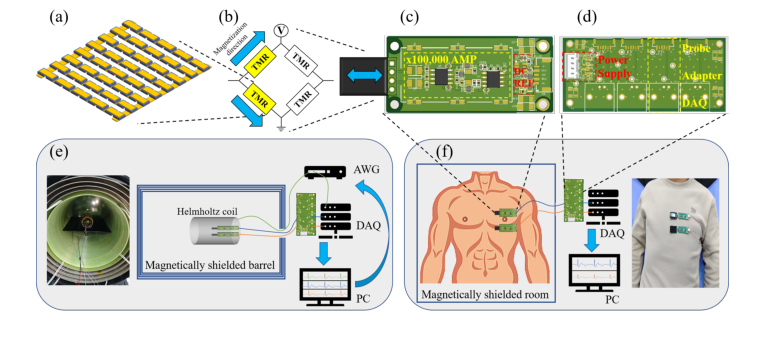

Figure 2. TMR-based MCG system and experiment setups. (a) A TMR sensor array composed of magnetic tunnel junctions (MTJs). (b) Four TMR elements in a Wheatstone bridge configuration for converting magnetic signals into differential voltage. (c) TMR sensor integrated with a 100 dB amplifier and power supply circuit. (d) 4-channel adapter module connecting the power supply and amplifier to the data acquisition system (DAQ). (e) The experiment setup for obtaining simulated data. (f) The experiment setup for obtaining real data.


Intuitive Domain Terms

- Tunnel Magnetoresistance (TMR) Sensor: Think of this as a highly sensitive "magnetic microphone." Just as a microphone picks up sound waves, this sensor picks up the tiny magnetic "whispers" of the heart.

- Gated Linear Unit (GLU): Imagine a smart filter or a "gatekeeper" in a building. It looks at incoming data and decides which parts are important (the heart's rhythm) and which parts are just annoying background chatter (noise), letting only the important signals pass through.

- QRS Complex: This is the most prominent "spike" in a heartbeat signal. If a heartbeat were a mountain range, the QRS complex would be the tallest, sharpest peak, representing the main electrical contraction of the heart.

- $1/f$ Noise: Think of this as a persistent, low-frequency hum or "static" that gets louder the slower you listen. It is a common type of interference in electronic sensors that is particularly difficult to filter out because it mimics the slow, rhythmic nature of biological signals.
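To make the "$1/f$" idea concrete, the sketch below synthesizes noise whose power falls off roughly as $1/f$ by reshaping the spectrum of white noise, then compares the average power in a low band and a high band. This is only an illustration: the sampling rate `fs`, the helper names `pink_noise` and `band_power`, and the chosen bands are assumptions, not values or methods taken from the paper.

```python
import numpy as np

def pink_noise(n_samples, fs, rng):
    """Shape white Gaussian noise so that its power spectrum falls off as 1/f."""
    white = rng.standard_normal(n_samples)
    spectrum = np.fft.rfft(white)
    freqs = np.fft.rfftfreq(n_samples, d=1.0 / fs)
    scale = np.zeros_like(freqs)             # the DC term stays at zero
    scale[1:] = 1.0 / np.sqrt(freqs[1:])     # amplitude ~ f^(-1/2), so power ~ 1/f
    return np.fft.irfft(spectrum * scale, n=n_samples)

def band_power(x, fs, f_lo, f_hi):
    """Mean spectral power of x for frequencies in [f_lo, f_hi) Hz."""
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    power = np.abs(np.fft.rfft(x)) ** 2
    mask = (freqs >= f_lo) & (freqs < f_hi)
    return power[mask].mean()

rng = np.random.default_rng(0)
fs = 1000                                    # Hz, hypothetical sampling rate
noise = pink_noise(10 * fs, fs, rng)         # 10 seconds of synthetic 1/f noise

low = band_power(noise, fs, 0.1, 1.0)
high = band_power(noise, fs, 10.0, 100.0)
print(f"mean power 0.1-1 Hz: {low:.3g}, 10-100 Hz: {high:.3g}")
```

The low band carries far more power per frequency bin than the high band, which is why this noise overlaps so badly with slow cardiac features.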

Notation Table

| Variable | Description |
|---|---|
| $T$ | The length of the MCG signal sample (number of time points). |
| $D$ | The feature dimension of the MCG signal. |
| $X_{\text{in}}$ | The input MCG feature sequence, where $X_{\text{in}} \in \mathbb{R}^{T \times D}$. |
| $X_{\text{out}}$ | The denoised output signal produced by the model. |
| $f_1, f_2$ | Learnable linear mapping functions within the GLU module. |
| $\theta_W, \theta_V$ | Parameters (weights) for the linear mappings $f_1$ and $f_2$. |
| $\sigma$ | The activation function (e.g., sigmoid or softmax) used for gating. |
| $\odot$ | The element-wise multiplication operator used in the gating mechanism. |

The authors address the denoising problem by replacing the standard self-attention (SA) mechanism—which they argue introduces redundant parameters—with a Gated Linear Unit (GLU).

In standard self-attention, the model computes:

$$X_{\text{out}} = \text{softmax} \left( \frac{QK^\top}{\sqrt{d_k}} \right) V$$

This requires separate projections for Query ($Q$) and Key ($K$), which the authors suggest leads to suboptimal convergence for periodic MCG signals. Instead, they propose a GLU-based approach:

$$X_{\text{out}} = \sigma (f_1(X_{\text{in}}; \theta_W)) \odot f_2(X_{\text{in}}; \theta_V)$$

Here, the model processes the input through two parallel pipelines ($f_1$ and $f_2$). The gating mechanism, controlled by $\sigma$, acts as an adaptive filter. In the Competitive Gating (CG) module, $\sigma$ is a softmax function, so the model learns to weight global periodic features, such as the QRS complex, more heavily across the entire sequence. In the Noise Gating (NG) module, $\sigma$ is a sigmoid function, which performs preliminary suppression of random noise. This dual-gating approach lets the network "clean" the signal by amplifying the recurring cardiac patterns while attenuating the irregular, non-periodic noise components that plague TMR sensor data.

The model is trained with a mean squared error (MSE) loss that minimizes the difference between the denoised output and the ground-truth signal, teaching the network to reconstruct the "true" cardiac waveform from the noisy raw data. The result is a system that recovers subtle features like P-waves and T-waves that were previously obscured by sensor noise.
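The MSE objective compares the network's output with the ground-truth waveform, so a better reconstruction yields a smaller loss. The toy sketch below illustrates this with a synthetic Gaussian pulse standing in for a clean beat; the pulse shape, noise levels, and variable names are illustrative assumptions rather than the paper's data.

```python
import numpy as np

def mse(estimate, target):
    """Mean squared error between an estimate and the ground-truth target."""
    return float(np.mean((estimate - target) ** 2))

rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 500)

# Toy stand-in for a clean cardiac pulse (not real MCG morphology).
clean = np.exp(-((t - 0.5) ** 2) / (2 * 0.01 ** 2))

# Two candidate "network outputs": one still very noisy, one mostly cleaned.
noisy = clean + 0.30 * rng.standard_normal(t.size)
partly_denoised = clean + 0.05 * rng.standard_normal(t.size)

loss_noisy = mse(noisy, clean)
loss_denoised = mse(partly_denoised, clean)
print(f"MSE noisy: {loss_noisy:.4f}, MSE partly denoised: {loss_denoised:.4f}")
```

During training, gradient descent pushes the parameters toward outputs like the second candidate, whose residual against the ground truth is smaller.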

Problem Definition & Constraints

Core Problem Formulation & The Dilemma

The Starting Point and Goal:

The input is a raw, long-sequence magnetocardiography (MCG) signal captured by Tunnel Magnetoresistance (TMR) sensors. These signals are heavily corrupted by noise, specifically $1/f$ electrical noise (spanning 0.1–100 Hz) and thermal agitation. The desired output is a clean, denoised signal in which the subtle, clinically significant features (specifically the P-wave and T-wave) are clearly recovered from the noise floor while the integrity of the QRS complex is maintained.

The Dilemma:

The fundamental trade-off lies in the conflict between noise suppression and feature preservation. Traditional signal processing methods (like digital filters or empirical mode decomposition) often struggle with non-stationary noise; they either fail to remove the noise effectively or, in the process of smoothing, inadvertently "wash out" the low-amplitude P and T waves, which are essential for diagnosing cardiac abnormalities. Furthermore, while deep learning models have succeeded in ECG denoising, they are optimized for different noise profiles (e.g., baseline drift or electrode motion). Applying these to TMR-based MCG signals results in suboptimal performance because the noise characteristics and sensor-specific artifacts are fundamentally different.
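The "wash out" effect of smoothing can be seen in a small experiment. The sketch below builds a toy beat from three Gaussian bumps (a small P-wave, a tall QRS-like spike, and a T-wave) and applies a plain moving-average filter. The bump positions, widths, amplitudes, and the 101-sample window are all hypothetical choices for illustration, not parameters from the paper.

```python
import numpy as np

def moving_average(x, window):
    """Simple moving-average low-pass filter with output aligned to the input."""
    kernel = np.ones(window) / window
    return np.convolve(x, kernel, mode="same")

fs = 1000                                   # Hz, hypothetical sampling rate
t = np.arange(0.0, 1.0, 1.0 / fs)

def bump(center, width, amplitude):
    """Gaussian bump used as a crude stand-in for a cardiac wave."""
    return amplitude * np.exp(-((t - center) ** 2) / (2 * width ** 2))

# Toy beat: low-amplitude P-wave, tall narrow QRS, moderate T-wave.
beat = bump(0.20, 0.02, 0.10) + bump(0.40, 0.008, 1.0) + bump(0.65, 0.04, 0.25)

smoothed = moving_average(beat, window=101)  # window of about 0.1 s

p_region = (t >= 0.1) & (t <= 0.3)
p_before = beat[p_region].max()
p_after = smoothed[p_region].max()
print(f"P-wave peak before: {p_before:.3f}, after smoothing: {p_after:.3f}")
```

A window wide enough to average away broadband noise also flattens the P-wave substantially, which is the trade-off that motivates a learned, signal-aware denoiser.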

The Harsh Constraints:

1. Non-Stationary Noise: The noise is not constant; it exhibits irregular amplitude and frequency variations, making simple thresholding or static filtering ineffective.

2. Data Sparsity of Features: In raw TMR-based MCG, the P and T waves are often completely obscured by noise, leaving only the R-peak visible. The model must "hallucinate" or reconstruct these features based on learned periodic patterns rather than just filtering the input.

3. Computational Complexity: Processing long-sequence signals (containing multiple cardiac cycles) creates a massive computational burden. The authors had to balance the need for high-resolution feature extraction with the practical requirement of real-time inference (e.g., $5.06$ ms per sample on an RTX 4090).

4. Architectural Mismatch: Standard self-attention mechanisms, while powerful for long-range dependencies, introduce redundant parameters (like separate Query and Key projections) that can lead to poor convergence when dealing with the specific periodic nature of cardiac signals.
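As a rough back-of-the-envelope check on the "redundant parameters" point in the last constraint, the sketch below counts projection weights under simplifying assumptions: single-head attention with square $D \times D$ projections for $Q$, $K$, and $V$, a GLU with two $D \times D$ mappings, and no biases or output projection. The paper's actual layers are convolutional, so real counts will differ; the function names are hypothetical.

```python
def self_attention_params(d_model):
    """Weights in the Q, K and V projections (each d_model x d_model, no biases)."""
    return 3 * d_model * d_model

def glu_params(d_model):
    """Weights in the two GLU mappings f1 and f2 (each d_model x d_model, no biases)."""
    return 2 * d_model * d_model

for d_model in (32, 64, 128):  # hypothetical feature dimensions
    sa = self_attention_params(d_model)
    glu = glu_params(d_model)
    print(f"D={d_model}: self-attention {sa} weights, GLU {glu} weights")
```

Under these assumptions the GLU drops one of the three projections, a one-third reduction in projection weights at any feature dimension.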

The authors bridge the gap between noisy input and clean signal by replacing the standard self-attention mechanism with a Gated Linear Unit (GLU).

In a standard self-attention mechanism, the output is computed as:

$$X_{\text{out}} = \text{softmax} \left( \frac{QK^\top}{\sqrt{d_k}} \right) V$$

where $Q, K, V$ are projections of the input $X_{\text{in}}$. The authors argue that this is inefficient for periodic MCG signals. Instead, they utilize a GLU, which performs gating via element-wise multiplication of two linear projections:

$$X_{\text{out}} = \sigma (f_1(X_{\text{in}}; \theta_W)) \odot f_2(X_{\text{in}}; \theta_V)$$

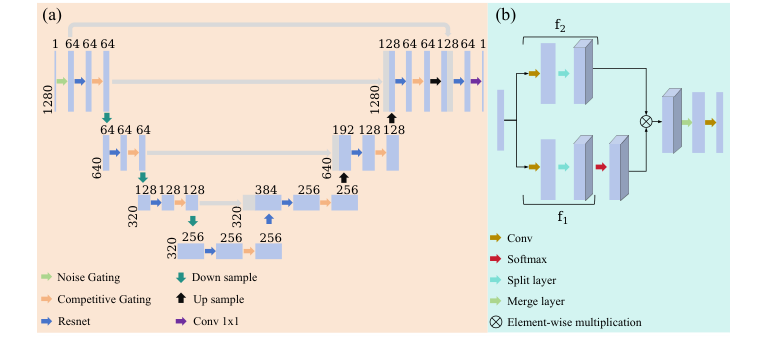

Figure 1. Structure of the proposed Multi-Level Gated U-Net model. (a) The whole architecture (b) The GLU module


Here, $\sigma$ acts as the gating function. By using a Competitive Gating (CG) module (where $\sigma$ is a softmax function), the model learns to weight global periodic features, allowing the network to prioritize the recurring QRS complexes. By using a Noise Gating (NG) module (where $\sigma$ is a sigmoid function), the model performs preliminary suppression of random noise.
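The difference between the two gate functions can be illustrated on a toy score vector. In the sketch below, the softmax is normalized along the time axis, which is an assumption about how "competition across the sequence" is realized; the scores and the two peak positions are made up for illustration.

```python
import numpy as np

def sigmoid(z):
    """Element-wise logistic function; each value is gated independently."""
    return 1.0 / (1.0 + np.exp(-z))

def softmax(z, axis):
    """Numerically stable softmax along the given axis."""
    shifted = z - z.max(axis=axis, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)

# Toy gate scores over 12 time steps, with two "R-peak-like" positions.
scores = np.full(12, -1.0)
scores[[3, 9]] = 4.0

ng_gate = sigmoid(scores)           # NG-style: independent gains in (0, 1)
cg_gate = softmax(scores, axis=0)   # CG-style: time steps compete, weights sum to 1

print("sigmoid gate:", np.round(ng_gate, 3))
print("softmax gate:", np.round(cg_gate, 3))
```

With the sigmoid, every time step keeps some independent gain. With the softmax, almost all of the weight concentrates on the two peak positions, because raising one weight necessarily lowers the others.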

This hierarchical U-Net architecture allows the model to learn multi-scale representations, effectively compressing the signal to extract high-level features and then reconstructing it to restore the subtle cardiac waveforms. The combination of these gating mechanisms allows the model to systematically amplify periodic cardiac signatures while attenuating irregular noise, a clever way to bypass the limitations of standard convolutional or attention-based approaches.

Why This Approach

The authors of this paper faced a fundamental mismatch between existing deep learning solutions and the specific noise characteristics of Tunnel Magnetoresistance (TMR) sensors. While standard methods like Transformers or Diffusion models (e.g., DeScoD) excel at ECG denoising—which typically deals with baseline drift and muscle artifacts—they struggle with the $1/f$ electrical noise and non-uniform spectral decay inherent to TMR-based Magnetocardiography (MCG).

The Logic of the Approach

The authors identified that existing state-of-the-art methods were insufficient because they often treat signal denoising as a generic sequence-to-sequence task, failing to exploit the strong, inherent periodicity of the cardiac QRS complex. Their key observation was that standard Self-Attention (SA) mechanisms introduce redundant parameters (via separate Query and Key projections) that lead to suboptimal convergence on the specific, repetitive structure of MCG signals.

Comparative Superiority and Structural Advantages

The MGU-Net is qualitatively superior to previous gold standards for several reasons:

- Gating vs. Attention: By replacing the standard SA mechanism with a Gated Linear Unit (GLU), the authors moved from a computationally expensive, parameter-heavy attention model to a more efficient gating mechanism. The GLU, defined as $X_{\text{out}} = \sigma (f_1(X_{\text{in}}; \theta_W)) \odot f_2(X_{\text{in}}; \theta_V)$, uses element-wise multiplication to act as an adaptive filter. This allows the model to "gate" out irregular noise while amplifying the periodic cardiac signatures.

- Hierarchical Feature Extraction: The U-Net architecture provides a structural advantage by enabling multi-scale feature learning. It captures both localized waveform details (like the subtle P and T waves) and global contextual patterns (the rhythm of the QRS complex) without the $O(T^2)$ memory bottleneck (in the sequence length $T$) associated with full-sequence self-attention in standard Transformers.

- Synergistic Design: The "marriage" between the problem and the solution lies in the integration of two specific gating variants:

  - Noise Gating (NG): Uses a sigmoid activation to perform preliminary suppression of random, high-frequency noise.

  - Competitive Gating (CG): Uses a softmax activation to globally weight the signal, ensuring that periodic cardiac features are prioritized across the entire sequence.
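The memory argument in the list above can be made concrete by counting intermediate entries. Full self-attention materializes a score matrix with one entry per pair of time steps, while an element-wise gate needs only one entry per time step and feature. The sequence lengths and feature dimension below are hypothetical, and the helper names are illustrative.

```python
def attention_score_entries(seq_len):
    """Entries in the full (seq_len x seq_len) attention score matrix."""
    return seq_len * seq_len

def gate_entries(seq_len, d_model):
    """Entries in an element-wise gate of shape (seq_len x d_model)."""
    return seq_len * d_model

d_model = 64  # hypothetical feature dimension
for seq_len in (1_000, 10_000):  # hypothetical sequence lengths
    attn = attention_score_entries(seq_len)
    gate = gate_entries(seq_len, d_model)
    print(f"T={seq_len}: attention scores {attn:,} entries, gate {gate:,} entries")
```

Multiplying the sequence length by ten multiplies the attention score matrix by one hundred but the gate by only ten, which matters for long multi-beat recordings.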

Why Alternatives Failed

The authors explicitly reject standard Transformer-based approaches because the redundant $Q/K$ projections in SA are unnecessary for signals with such strong self-correlation. Unlike GANs or basic CNNs, which might struggle to maintain the delicate morphology of the P and T waves under high-noise conditions, the MGU-Net's gating mechanism is specifically tuned to the periodicity of the MCG signal. This allows it to outperform DeScoD and APR-CNN, which, as the authors show, fail to restore the QRS complex in several cardiac cycles.

In summary, the MGU-Net is not just a "bigger" model; it is a specialized architecture that aligns its mathematical operations—specifically the gating of linear projections—with the physical reality of TMR sensor noise. This approach effectively reduces the computational burden while significantly improving the Signal-to-Noise Ratio (SNR) from roughly 3.9 dB to 14.5 dB on real datasets, proving that a tailored inductive bias is often more effective than a generic, high-capacity model in specialized biomedical engineering tasks.
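To put the reported decibel figures in perspective, the sketch below uses a common SNR definition, $10 \log_{10}$ of signal power over residual noise power, and converts a dB difference into a power ratio. The paper's exact SNR formula is not given here, so this definition and the helper names are assumptions.

```python
import numpy as np

def snr_db(clean, estimate):
    """SNR in dB, treating (estimate - clean) as the residual noise."""
    signal_power = np.sum(clean ** 2)
    noise_power = np.sum((estimate - clean) ** 2)
    return 10.0 * np.log10(signal_power / noise_power)

def db_to_power_ratio(delta_db):
    """Convert a difference in dB into the corresponding power ratio."""
    return 10.0 ** (delta_db / 10.0)

# Sanity check on a toy signal: a 10% amplitude error is 1% power, i.e. 20 dB.
clean = np.sin(np.linspace(0.0, 2.0 * np.pi, 100))
toy_snr = snr_db(clean, 1.1 * clean)

gain_db = 14.5 - 3.9  # improvement reported on the real dataset
ratio = db_to_power_ratio(gain_db)
print(f"toy SNR: {toy_snr:.1f} dB; a {gain_db:.1f} dB gain is a {ratio:.1f}x power ratio")
```

Under this definition, and for a fixed signal power, a gain of about 10.6 dB corresponds to residual noise power that is more than ten times lower.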

Mathematical & Logical Mechanism

The MGU-Net (Multi-Level Gated U-Net) addresses the critical challenge of denoising magnetocardiography (MCG) signals acquired via Tunnel Magnetoresistance (TMR) sensors. Unlike SQUID-based systems, TMR sensors are cost-effective but suffer from high-frequency noise and $1/f$ noise, which obscure subtle cardiac features like P-waves and T-waves.

The Master Equation

The core logic of the Gated Linear Unit (GLU) module, which replaces the standard self-attention mechanism to better capture periodic cardiac patterns, is defined as:

$$X_{\text{out}} = \sigma (f_1(X_{\text{in}}; \theta_W)) \odot f_2(X_{\text{in}}; \theta_V)$$

Tearing the equation apart:

- $X_{\text{in}}$: The input MCG feature sequence of dimension $T \times D$ (time steps $\times$ feature dimension). It represents the raw, noisy signal segments.

- $f_1(\cdot; \theta_W)$ and $f_2(\cdot; \theta_V)$: These are learnable linear mappings (implemented via convolutional layers). They transform the input into two distinct feature spaces.

- $\sigma(\cdot)$: The activation function. In the "Noise Gating" (NG) module, this is a sigmoid function to suppress random noise. In the "Competitive Gating" (CG) module, this is a softmax function to compute global gating weights.

- $\odot$: The element-wise (Hadamard) product. This is the "gate." It acts as a dynamic filter where the output of $f_1$ determines the "importance" or "gain" of the features produced by $f_2$.
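The terms above can be assembled into a minimal NumPy sketch of the gating equation. It is a simplification under stated assumptions: $f_1$ and $f_2$ are plain matrix multiplications (the text describes convolutional layers), the softmax variant normalizes along the time axis, and the weights are random rather than trained. The function name `glu` and the sizes `T` and `D` are illustrative.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def softmax(z, axis):
    shifted = z - z.max(axis=axis, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)

def glu(x_in, theta_w, theta_v, gate="sigmoid"):
    """X_out = sigma(f1(X_in; theta_W)) * f2(X_in; theta_V), element-wise."""
    a = x_in @ theta_w                 # f1: gate branch, shape (T, D)
    b = x_in @ theta_v                 # f2: value branch, shape (T, D)
    if gate == "sigmoid":              # NG-style gate
        g = sigmoid(a)
    elif gate == "softmax":            # CG-style gate, normalized over time (assumption)
        g = softmax(a, axis=0)
    else:
        raise ValueError(f"unknown gate: {gate}")
    return g * b                       # Hadamard product: the gate scales the values

rng = np.random.default_rng(0)
T, D = 200, 8                          # hypothetical sequence length and feature size
x_in = rng.standard_normal((T, D))
theta_w = rng.standard_normal((D, D)) / np.sqrt(D)
theta_v = rng.standard_normal((D, D)) / np.sqrt(D)

ng_out = glu(x_in, theta_w, theta_v, gate="sigmoid")
cg_out = glu(x_in, theta_w, theta_v, gate="softmax")
print(ng_out.shape, cg_out.shape)
```

Because the sigmoid gate lies between 0 and 1, the NG-style output can never exceed the magnitude of the value branch; it can only pass or attenuate what $f_2$ produces.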

Step-by-Step Flow

- Input: A noisy 10-second MCG signal enters the network.

- Noise Gating (NG): The signal first passes through an NG module, which expands the channel dimension and uses a sigmoid-gated pipeline to perform preliminary suppression of random, non-periodic noise.

- Hierarchical Encoding: The signal traverses four downsampling stages. Each stage uses a ResBlock to extract local features and a Competitive Gating (CG) module to learn global periodic dependencies.

- Bottleneck: At the deepest level, the model aggregates high-level representations, capturing the global rhythm of the cardiac cycle.

- Decoding: Three upsampling stages restore the signal resolution. Features from the encoder are concatenated via skip connections to preserve fine-grained temporal details (like the P-wave).

- Output: A final $1 \times 1$ convolution collapses the channels to produce a single, clean denoised MCG signal.
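The final step of the flow above, a $1 \times 1$ convolution, is simply a per-time-step linear mix of channels. The sketch below shows this for a feature map of shape (channels, time); the channel count, sequence length, and random weights are placeholders, not the model's real values.

```python
import numpy as np

def pointwise_conv1d(features, weight, bias):
    """1x1 convolution: mix channels independently at every time step.

    features: (C_in, T), weight: (C_out, C_in), bias: (C_out,) -> (C_out, T)
    """
    return weight @ features + bias[:, None]

rng = np.random.default_rng(0)
C, T = 16, 1000                        # hypothetical channel count and length
features = rng.standard_normal((C, T))
weight = rng.standard_normal((1, C))   # a single output channel
bias = np.zeros(1)

denoised = pointwise_conv1d(features, weight, bias)[0]
print(denoised.shape)
```

No neighboring time steps are involved, so this layer changes the number of channels without blurring the temporal detail restored by the decoder.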

Optimization Dynamics

The model learns by minimizing the Mean Squared Error (MSE) between the denoised output and the ground-truth signal. The optimization is driven by the Adam optimizer. The "learning" happens as the network adjusts the parameters $\theta_W$ and $\theta_V$ within the GLU modules. Because the MCG signal is highly periodic, the gradients effectively propagate the error signal back through the gating branches, forcing the model to align its internal "gate" with the timing of the cardiac cycles. This allows the model to distinguish between the stochastic, non-periodic noise (which is suppressed) and the structured, periodic cardiac signal (which is preserved).
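To see how the error signal reaches both branches, consider a simplified case: a single sigmoid-gated module trained directly on the MSE, with $A = f_1(X_{\text{in}}; \theta_W)$, $B = f_2(X_{\text{in}}; \theta_V)$, and $X^{*}$ denoting the ground-truth signal (notation introduced here for illustration). The loss and its gradient with respect to the output are:

$$\mathcal{L} = \frac{1}{TD} \lVert X_{\text{out}} - X^{*} \rVert_F^2, \qquad G = \frac{\partial \mathcal{L}}{\partial X_{\text{out}}} = \frac{2}{TD} \left( X_{\text{out}} - X^{*} \right)$$

Applying the product rule to $X_{\text{out}} = \sigma(A) \odot B$ gives the gradients for the two branches:

$$\frac{\partial \mathcal{L}}{\partial B} = G \odot \sigma(A), \qquad \frac{\partial \mathcal{L}}{\partial A} = G \odot B \odot \sigma(A) \odot \left( 1 - \sigma(A) \right)$$

The value branch receives an error signal scaled by the gate, and the gate branch receives one scaled by the value, so each branch is updated most strongly where the other is active. In the full network these gradients are further propagated to $\theta_W$ and $\theta_V$ through the chain rule.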

Results, Limitations & Conclusion

The authors propose the Multi-Level Gated U-Net (MGU-Net). The architecture leverages two primary innovations:

1. Hierarchical U-Net Backbone: This allows the model to learn multi-scale representations, capturing both global rhythmic patterns and local waveform details.

2. Gated Linear Unit (GLU) Modules: Instead of standard self-attention, they use GLU modules defined as:

$$X_{\text{out}} = \sigma (f_1(X_{\text{in}}; \theta_W)) \odot f_2(X_{\text{in}}; \theta_V)$$

This gating mechanism effectively acts as an adaptive filter that amplifies the periodic cardiac signatures while suppressing irregular noise.

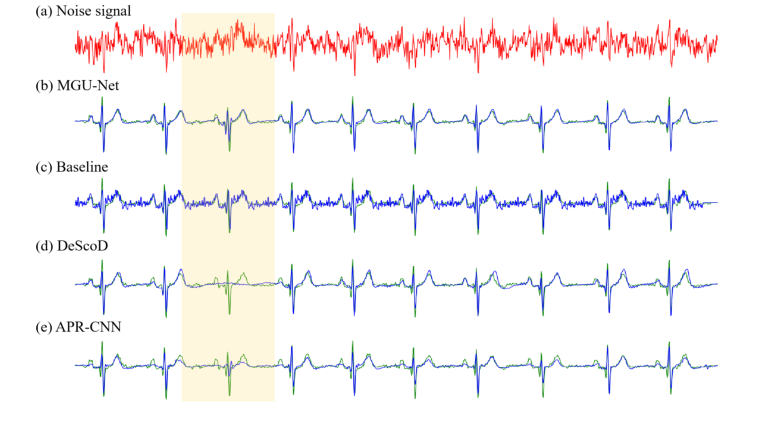

Figure 3. Denoising performance of different models on a single sample from the simulated dataset. The red line represents the noisy input signal, the green line represents the ground-truth signal, and the blue line represents the denoised signal. (a) The original noisy MCG signal. (b) Denoised signal by MGU-Net. (c) Denoised signal by Baseline. (d) Denoised signal by DeScoD. (e) Denoised signal by the APR-CNN model


Experimental Validation

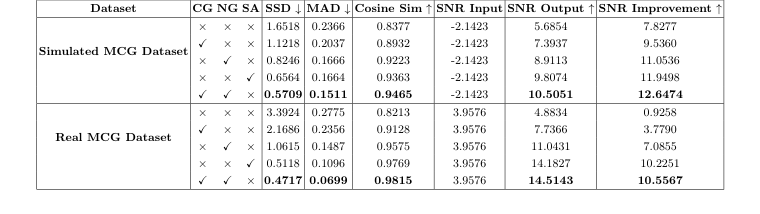

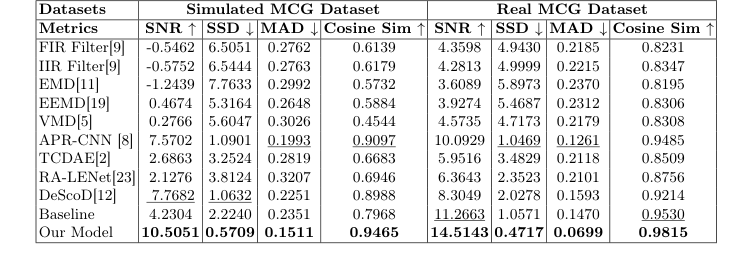

The authors compared their model against traditional signal processing methods (FIR/IIR filters, EMD, VMD) and state-of-the-art deep learning baselines (APR-CNN, TCDAE, DeScoD). The main evidence is the SNR improvement: on the real-world dataset, MGU-Net reached an SNR of $14.514$ dB, compared with $8.3049$ dB for the next best competitor (DeScoD). The ablation study isolates the Noise Gating (NG) and Competitive Gating (CG) modules and indicates that the combination of the two components drives the performance.

Discussion and Future Perspectives

This paper successfully demonstrates that specialized architectural inductive biases (like gating for periodicity) can outperform generic deep learning models in specialized hardware domains. To evolve these findings, I propose the following discussion topics:

- Generalization to Pathological Signals: The current study relies on healthy volunteers. How would the MGU-Net perform on patients with arrhythmias or myocardial ischemia, where the "periodic" nature of the QRS complex is fundamentally altered?

- Hardware-Algorithm Co-design: Since the noise profile is specific to TMR sensors, could we further improve performance by incorporating the physical sensor noise model directly into the loss function?

- Real-time Clinical Integration: While the inference speed is impressive (5.06 ms), clinical deployment requires rigorous validation of the model's uncertainty.

Table 2. Ablation studies of the proposed model on the simulated and real MCG datasets. The impact of the Competitive Gating (CG) and Noise Gating (NG) modules is evaluated.


Table 1. Comparison on the Simulated and MCG Real Datasets
