Experimental secure multiparty computation from quantum oblivious transfer with bit commitment

Secure multiparty computation enables collaborative computations across multiple users while preserving individual privacy, which has a wide range of applications in finance, machine learning and healthcare.

Background & Academic Lineage

The Origin & Academic Lineage

The problem addressed in this paper, secure multiparty computation (MPC), first emerged in the academic field of cryptography in the early 1980s. Pioneering work by Andrew Yao in 1982 and 1986 [1, 2] laid the theoretical groundwork, demonstrating how multiple parties could jointly compute a function on their private inputs without revealing those inputs to each other. This concept was motivated by a fundamental need for privacy-preserving collaboration, particularly in sensitive domains. For instance, financial institutions might want to detect fraud by comparing blacklists or suspicious transaction patterns without disclosing their entire client databases. Similarly, in privacy-preserving machine learning or genetic data analysis, organizations need to train models or perform analyses on shared sensitive data without exposing individual datasets.

A crucial building block for realizing MPC is Oblivious Transfer (OT) [6]. Classical OT protocols, which allow a sender to transmit data such that a receiver learns only a selected piece without the sender knowing the choice, typically rely on computational hardness assumptions [7, 8]. These assumptions, such as the difficulty of solving the RSA problem or discrete logarithm, form the bedrock of their security. However, the advent of quantum computing, particularly Shor's algorithm, revealed a significant "pain point": these classical cryptographic assumptions are vulnerable to quantum attacks. This vulnerability meant that the security of classical OT, and by extension, MPC built upon it, could be fundamentally broken by sufficiently powerful quantum computers.

This critical limitation spurred the exploration of quantum analogs to oblivious transfer, leading to the development of Quantum Oblivious Transfer (QOT) protocols [9]. Early QOT protocols, such as those extensively studied in the "noisy storage model" [13-15], offered a path towards quantum-secure communication. However, these previous QOT approaches had their own fundamental limitation: their security proofs often depended on restricting the adversary's technological capabilities, specifically assuming that the adversary had only noisy or bounded quantum memory [17]. This assumption is a significant weakness, as future advancements in quantum memory technology could render these protocols insecure. The authors of this paper were thus compelled to develop a QOT protocol that achieves security without such restrictive assumptions on the adversary's quantum memory, instead relying on robust cryptographic primitives like bit commitment to guarantee security against any classical or quantum attacks with polynomial time complexity. Furthermore, despite several experimental demonstrations of QOT, a significant pain point remained the lack of practical, real-world implementations of secure multiparty computation based on QOT, which this paper aims to address.

Intuitive Domain Terms

-

Secure Multiparty Computation (MPC): Imagine a group of friends who want to find out who among them has the highest income, but no one wants to reveal their actual salary to anyone else. MPC is like a special, trusted calculator that can process everyone's private salary information and output only the highest salary, without ever exposing any individual's specific income to the others. It's about collaborating on a calculation while keeping all individual inputs secret.

-

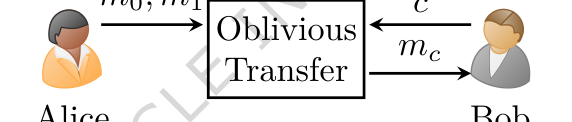

Oblivious Transfer (OT): Think of a digital vending machine that offers two secret messages, $m_0$ and $m_1$. You, as the receiver, can choose to receive either $m_0$ or $m_1$, and the machine will deliver your chosen message. The "oblivious" part means that the machine (the sender) will never know which message you picked, and you will never learn anything about the message you didn't pick. You get your desired item, and the sender remains unaware of your choice.

Figure 1. 1-out-of-2 Oblivious Transfer

Figure 1. 1-out-of-2 Oblivious Transfer

-

Bit Commitment: This is like sealing a secret prediction in a tamper-proof envelope. Before an event, you write down your prediction (a "bit" of information) on a piece of paper, seal it in an opaque envelope, and hand it to a trusted third party. This is the "commitment" phase. Later, after the event has occured, you can ask the third party to open the envelope and reveal your prediction. This is the "opening" phase. The key is that once committed, you cannot change your prediction, and the third party cannot learn your prediction until you decide to open it. This ensures that your prediction was made before the event and not altered.

-

Private Set Intersection (PSI): Consider two banks, each with a list of customer accounts. One bank has a blacklist of suspicious accounts, and the other has a list of new clients. They want to find out which new clients appear on the blacklist, but neither bank wants to reveal their entire list of accounts to the other. PSI is a cryptographic protocol that allows them to discover only the common accounts (the intersection) without disclosing any other information from either list. It's like finding common friends between two groups without revealing the full list of friends in each group.

Notation Table

| Notation | Description | Type |

|---|---|---|

| $x \in \{0,1\}^n$ | Alice's randomly chosen bit string for encoding | Variable |

| $\theta \in \{0,1\}^n$ | Alice's randomly chosen basis string for encoding | Variable |

| $|x\rangle_{\theta}$ | Polarization-encoded single-photon state sent by Alice | Variable |

| $\hat{\theta} \in \{0,1\}^n$ | Bob's randomly chosen basis string for measurement | Variable |

| $\hat{x} \in \{0,1\}^n$ | Bob's measurement outcomes | Variable |

| $c_i$ | Bob's commitment for the $i$-th round | Variable |

| $h$ | Cryptographic hash function (e.g., SHA-256) | Parameter |

| $r_i$ | Random bit string used by Bob in commitment $c_i$ | Variable |

| $T \subset [n]$ | Alice's randomly selected subset of rounds for testing | Variable |

| $\alpha$ | Ratio of test bits, $|T| = \alpha n$ | Parameter |

| $c \in \{0,1\}$ | Bob's choice bit, indicating which message he wants | Variable |

| $m_0, m_1$ | Alice's two messages, one of which Bob will receive | Variable |

| $m_c$ | The message Bob successfully receives (either $m_0$ or $m_1$) | Variable |

| Encode | Function for error-correcting code | Parameter |

| Decode | Function for decoding error-corrected messages | Parameter |

| $f_0, f_1$ | Hash functions for privacy amplification | Parameter |

| $\lambda$ | Length of the messages $m_0, m_1$ | Parameter |

| $n$ | Total number of single-photon states exchanged | Parameter |

| $N$ | Error correction code length | Parameter |

| $t$ | Maximum number of correctable bit flip errors | Parameter |

| $P_{\text{fpass}}$ | Probability of false failure during Alice's test | Parameter |

| $P_{\text{fcorrect}}$ | Probability of error correction failure | Parameter |

| $\text{cost}_{\text{cheat}}$ | Expected cost for a malicious Bob to successfully cheat | Parameter |

Problem Definition & Constraints

Core Problem Formulation & The Dilemma

The core problem addressed by this paper is the development and experimental demonstration of a Quantum Oblivious Transfer (QOT) protocol that is robustly secure against any quantum attacks, including those from adversaries possessing powerful quantum memory, and is practical for real-world Secure Multiparty Computation (MPC) applications.

- Input/Current State:

- Two or more parties (e.g., Alice and Bob) hold private datasets.

- They wish to jointly compute a function on these datasets (e.g., find common elements in a Private Set Intersection, PSI) without revealing any additional information about their private inputs to each other.

- Existing classical Oblivious Transfer (OT) protocols, which serve as a fundamental building block for MPC, rely on computational hardness assumptions (such as the RSA problem or discrete logarithm problem). These assumptions are known to be vulnerable to quantum attacks, specifically Shor's algorithm.

- Prior QOT protocols, while offering some level of quantum security, often depended on the "noisy storage model." This model assumes that an adversary has only limited or noisy quantum memory, a physical constraint that is becoming increasingly unrealistic with advancements in quantum technology.

- Output/Goal State:

- A QOT protocol that achieves security against any classical or quantum attacks, including those from adversaries with perfect, long-lived quantum memory. This means the security should not rely on assumptions about the adversary's technological limitations.

- The protocol's security should be based on more foundational and robust cryptographic primitives, specifically quantum-secure bit commitment schemes derived from collision-resistant hash functions.

- An experimental implementation of this QOT protocol that demonstrates its functionality and practicality for real-world MPC problems, such as enabling two banks to securely identify common suspicious accounts without disclosing other client data.

- Missing Link/Mathematical Gap: The precise missing link is a QOT protocol that bridges the gap between theoretical quantum security (which often relies on strong, sometimes unrealistic, physical assumptions) and practical, robust implementation. Previous QOT implementations were either theoretical or limited by the noisy storage model. This paper aims to provide a QOT protocol whose security is guaranteed by cryptographic primitives (bit commitment) rather than physical limitations, making it resilient to future quantum memory advancements, and then demonstrate its real-world applicability.

The central dilemma that has trapped previous researchers is the painful trade-off between achieving strong, unconditional quantum security and maintaining practical feasibility. Classical OT is efficient but quantum-vulnerable. Earlier QOT protocols offered quantum security but at the cost of relying on the "noisy storage model" assumption. This creates a dilemma: improving one aspect (e.g., quantum memory technology) directly breaks the security of protocols relying on the noisy storage model. The challenge is to design a QOT protocol that is both secure against an all-powerful quantum adversary (i.e., not limited by noisy storage) and still efficient enough to be implemented and used in real-world scenarios without prohibitive computational or communication overheads. The authors address this by leveraging bit commitment, which offers a stronger security foundation but introduces its own set of implementation complexities.

Constraints & Failure Modes

The authors encountered several harsh, realistic walls and constraints during the development and implementation of their QOT protocol:

-

Physical Limitations of Quantum Hardware:

- Imperfect Single-Photon Sources: Real-world quantum devices, such as Alice's laser source, do not emit perfect single photons. They typically approximate them using weak coherent states, which can lead to a non-zero probability of emitting multiple photons or no photons at all. This imperfection is a critical vulnerability that a malicious Bob could exploit (e.g., via Photon Number Splitting attacks).

- Transmission Loss and Detector Noise: Photons are inevitably lost during transmission through the quantum channel due to absorption and scattering. Additionally, Bob's single-photon detectors (SPDs) are not perfect and suffer from "dark counts" (spurious detections without an incoming photon) and limited detection efficiency. These factors contribute to overall channel loss and increase the bit-error rate.

- Bit Flip Errors: Transmission noise and measurement errors are inherent in quantum communication, leading to bit flips. These errors can cause the protocol to fail during critical verification steps (e.g., Alice's test in steps 3-5 of the QOT protocol) or prevent Bob from successfully decoding the intended message in step 9. The total failure probability is bounded by $P_{failed} \leq P_{fpass} + P_{fcorrect}$.

- Optical Attenuation: As demonstrated in the experiments, increasing optical attenuation (channel loss) directly reduces the number of detected photons, which in turn lowers the effective code rate (throughput) of the QOT protocol. This severely limits the practical distance and speed over which the protocol can operate.

-

Adversarial Capabilities and Security Requirements:

- Unbounded Quantum Memory: Unlike previous QOT protocols, this work explicitly does not assume that an adversary has noisy or bounded quantum memory. This means the protocol must be secure even if a malicious Bob possesses perfect, long-lived quantum memory, allowing him to store received qubits indefinitely and delay his measurements until Alice reveals her bases. This is a significantly stronger security requirement.

- Photon Number Splitting (PNS) Attacks: A malicious Bob could attempt a PNS attack by exploiting multi-photon emissions from Alice's weak coherent source. He could measure one photon immediately and store the others in quantum memory, then measure the stored photons after Alice announces her measurement bases, thereby learning information he is not supposed to. The protocol must include mechanisms (like decoy-state QKD) to detect and prevent such attacks.

- Circumventing Bit Commitment: A malicious Bob might try to cheat the bit commitment scheme by delaying his measurements until Alice reveals the correct basis. This would allow him to learn both of Alice's messages ($m_0$ and $m_1$). The protocol must ensure that the computational cost for Bob to bypass the commitment verifications (steps 3-5) is astronomically high, making such an attack practically infeasible. The paper calculates this "cheating cost" to be approximately $3.8 \times 10^{12}$, implying an attacker would need about 120,000 years to successfully cheat once if each protocol run takes one second.

-

Computational and Data-Driven Constraints:

- Error Correction Overhead: To counteract bit flip errors, classical error correction codes (e.g., BCH codes) are necessary. This introduces computational overhead for encoding and decoding, and requires careful management of the error rate; if the error rate exceeds a certain threshold, the protocol must abort.

- Privacy Amplification: To ensure that Bob gains negligible information about the message he did not select, privacy amplification using universal hash functions is applied. This adds another layer of computational processing.

- Scalability for Real-World Applications: The protocol needs to be scalable to handle large datasets, such as $10^5$ elements per party in the Private Set Intersection (PSI) application. While the paper demonstrates practical communication costs (e.g., 20MB for $10^5$ elements) and runtimes (below 0.4 seconds on a 1Gbps network), scaling to even larger datasets or more complex MPC functions remains a continuous challenge.

- Integration Complexity: The QOT protocol is not a standalone solution but must be integrated with other classical cryptographic primitives (like Oblivious Pseudorandom Function, OPRF, and hash functions for bit commitment) and classical communication channels, adding to the overall system design and implementation complexity.

Why This Approach

The Inevitability of the Choice

The adoption of this specific quantum oblivious transfer (QOT) protocol, enhanced with a bit commitment scheme, was not merely a preference but an inevitability given the stringent security requirements of secure multiparty computation (MPC) in a quantum-threatened landscape. The authors recognized that traditional "state-of-the-art" (SOTA) methods, primarily classical oblivious transfer (OT) protocols, were fundamentally insufficient. Classical OT relies on computational hardness assumptions, such as the difficulty of solving the RSA problem or discrete logarithm problems. However, these assumptions are known to be vulnerable to quantum attacks, specifically Shor's algorithm, which can efficiently break these cryptographic foundations. This vulnerability renders classical OT unsuitable for applications demanding long-term security against quantum adversaries.

Furthermore, even prior QOT protocols, which attempted to leverage quantum mechanics for security, were found wanting. Many of these earlier quantum approaches operated under the "noisy storage model," assuming that an adversary's quantum memory would be inherently noisy or limited. The authors explicitly state that these protocols "become vulnerable when large and reliable quantum memory becomes available" [17]. The exact moment of realization that these methods were insufficient likely stemmed from the understanding that relying on limitations of an adversary's technology (like noisy quantum memory) is a weak security posture. A truly robust solution must guarantee security even against an adversary with advanced, reliable quantum memory. Therefore, a QOT protocol that does not make such restrictive assumptions, but instead builds security on stronger cryptographic primitives like bit commitment, became the only viable path forward for achieving unconditional security against any classical or quantum attacks.

Comparative Superiority

This QOT protocol demonstrates qualitative superiority over previous gold standards by fundamentally altering the security model. Unlike earlier QOT protocols that relied on the "noisy storage model," this approach does not restrict the adversary's technological capabilities. Instead, it employs a bit commitment scheme to guarantee security against delayed-measurement attacks, even if the adversary possesses large and reliable quantum memory. This is a critical structural advantage, moving from security based on an adversary's limitations to security based on cryptographic primitives.

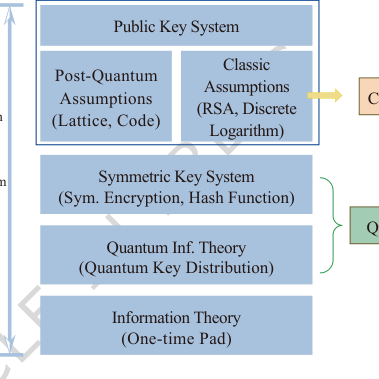

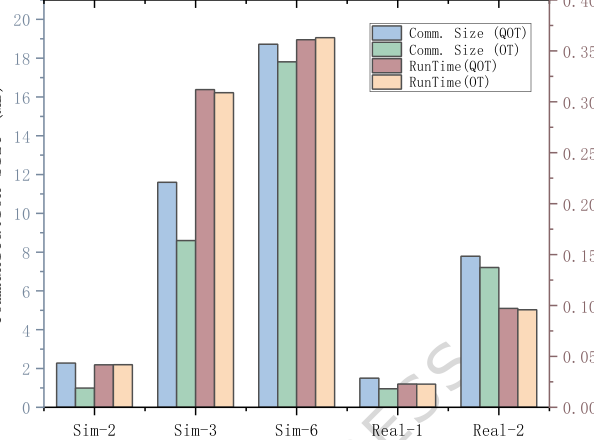

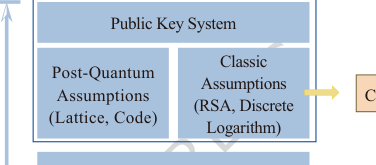

The security of this protocol is rooted in "quantum-secure bit commitment schemes," which can be constructed from collision-resistant hash functions. As detailed in Table 2 and Figure 5 of the paper, this places the protocol's security on "unstructured" symmetric-key problems, which are considered more foundational and rely on weaker cryptographic assumptions compared to the "structured" problems (like lattice-based or discrete logarithm) underpinning classical or even post-quantum OT. This makes the method overwhelmingly superior in terms of its foundational security guarantees, offering robustness against future advances that might break structured problems.

Quantitatively, the paper demonstrates the immense cost for a malicious Bob to circumvent the bit commitment scheme and steal both of Alice's messages. The calculated "cheating cost" is approximately $3.8 \times 10^{12}$ (Equation 6). To provide an intuitive understanding, if one run of the protocol takes one second, Bob would need roughly 120,000 years on average to successfully cheat just once. This makes the probability of cheating "essentially negligible in any realistic deployment," a level of security far beyond what classical or noisy-storage-model QOT protocols can offer. This structural advantage, based on strong, verifiable cryptographic primitives rather than technological assumptions, is what makes it qualitatively superior.

Alignment with Constraints

The chosen QOT protocol, augmented with a bit commitment scheme, perfectly aligns with the harsh requirements typically defined for secure multiparty computation (MPC) problems. Assuming the constraints from Step 2 included the need for strong privacy, security against quantum adversaries, and practical experimental feasibility, this solution forms a perfect "marriage" with these demands.

- Privacy Preservation: The core of MPC, and specifically Private Set Intersection (PSI), is to enable collaborative computation without revealing individual private data. The QOT protocol inherently achieves this by allowing a sender to transmit data such that the receiver learns only the selected piece, while the sender remains unaware of the choice (Figure 1). The application to PSI, where two banks identify common suspicious accounts "without disclosing any other data," directly fulfills this privacy constraint.

- Quantum Security: A paramount requirement in modern cryptography is resilience against quantum attacks. Classical OT protocols fail here due to their reliance on assumptions vulnerable to Shor's algorithm. Previous QOT protocols in the noisy storage model are also inadequate against adversaries with advanced quantum memory. This protocol, however, is explicitly designed to be "secure against any classical or quantum attacks with polynomial time complexity" (Page 4, Section 2.1), and crucially, "does not rely on assumptions about noisy or limited quantum memory on the part of the adversary" (Page 9, Section 3). The integration of a bit commitment scheme and the use of a decoy-state QKD system (for photon splitting attack prevention) are unique properties that directly address and overcome the quantum threat constraint.

- Practical Experimental Feasibility: The paper is an experimental implementation of QOT, demonstrating its "first practical application" to a real-world problem (PSI). The results show that the protocol is scalable for set sizes up to $10^5$ elements, with communication costs (e.g., 20MB for $10^5$ elements) and runtimes (below 0.4 seconds on a 1Gbps network) that are "already practical for many real-world applications" (Page 8, Section 2.3). This direct experimental validation and demonstrated scalability confirm its alignment with the practical feasibility constraint.

Rejection of Alternatives

The paper clearly articulates the reasons for rejecting alternative approaches, particularly classical OT and previous QOT protocols based on the noisy storage model.

- Classical Oblivious Transfer (OT): The primary reason for rejecting classical OT is its inherent vulnerability to quantum attacks. The authors state that the security of classical OT protocols is "based on the conjectured computational hardness assumptions [7, 8], such as the RSA problem and discrete logarithm, which are vulnerable to quantum attacks using Shor's algorithm" (Page 3, Section 1). For applications requiring long-term security in a post-quantum era, classical OT is simply not viable.

- Previous Quantum Oblivious Transfer (QOT) Protocols (Noisy Storage Model): While an advancement over classical methods, earlier QOT protocols, extensively studied in the "noisy storage model" [13-15], are also deemed insufficient. The paper explicitly notes that "this protocol becomes vulnerable when large and reliable quantum memory becomes available" [17] (Page 3, Section 1). The authors' protocol is designed to "surpass previous approaches only secure in the noisy storage model" (Page 2, Abstract) by not assuming limitations on an adversary's quantum memory. The key difference is the mandatory commitment of Bob's measurement bases via a bit commitment scheme, which prevents delayed-measurement attacks that would otherwise compromise security if an adversary had perfect quantum memory (Page 4, Section 2.1; Page 12). This rejection is based on a stronger adversarial model, where the adversary is not limited by noisy or bounded quantum memory.

The paper does not discuss other popular machine learning or data processing approaches like GANs, Diffusion models, or Transformers, as these are not relevant alternatives for the fundamental cryptographic primitive of oblivious transfer or secure multiparty computation. The experiment presented here is a foundational step for quantum-secure MPC.

Figure 5. Hierarchy of cryptographic assumptions

Figure 5. Hierarchy of cryptographic assumptions

Mathematical & Logical Mechanism

The Master Equation

The core mathematical engine of this paper, particularly regarding its security claims, is encapsulated in the calculation of the minimum expected cost for a malicious Bob to successfully cheat the protocol. This value, denoted as $cost_{cheat}$, represents the adversary's optimal strategy to circumvent the security mechanisms. It is an optimization problem that quantifies the robustness of the Quantum Oblivious Transfer (QOT) protocol against a specific type of attack.

$$ cost_{cheat} = \min_{0 \le s_1 \le n, 0 \le s_2 \le 2(N-t)-s_1} \frac{2^{s_2}}{P_{bypass, s_1} \cdot P_{cheat, s_1, s_2}} $$

While this equation defines the ultimate security metric, its components, $P_{bypass, s_1}$ and $P_{cheat, s_1, s_2}$, are themselves complex probabilities that rely on other fundamental calculations like $P_{fpass}$ and $P_{fcorrect}$ for honest party correctness, and $Pr[check\ passed]$ for commitment verification. These interconnected equations collectively form the mathematical backbone for evaluating the protocol's integrity.

Term-by-Term Autopsy

Let's dissect the master equation and its underlying components, explaining each variable, coefficient, and mathematical operator. The parameters used in the experimental implementation are $\lambda = 256$ (message length), $n = 2044$ (number of single photon states), $\alpha = 1/2$ (ratio of test bits), $\beta = 0.95$ (minimum acceptable bit matching ratio), $N = 511$ (error correction code length), and $t = 30$ (maximum correctable bit flip errors).

-

$\min_{0 \le s_1 \le n, 0 \le s_2 \le 2(N-t)-s_1}$: This is the minimization operator.

- Mathematical definition: It finds the smallest value of the expression that follows, by iterating through all possible valid combinations of $s_1$ and $s_2$.

- Physical/logical role: This represents the malicious Bob's optimal strategy. Bob, as an adversary, will choose the number of qubits to delay ($s_1$) and the number of bits to guess ($s_2$) in a way that minimizes his "cost" to cheat. By finding this minimum, the protocol designers ensure that even the most efficient cheating strategy is prohibitively expensive.

- Why minimization?: The goal is to establish the worst-case scenario for the protocol's security. If cheating is expensive even under the adversary's optimal strategy, the protocol is robust.

-

$s_1$: Number of qubits Bob delays measurement for.

- Mathematical definition: An integer variable, $0 \le s_1 \le n$.

- Physical/logical role: This is a strategic choice by a malicious Bob. He might delay measuring some qubits, hoping to learn Alice's encoding bases later (in step 6 of the protocol) and thus gain more information than allowed.

-

$s_2$: Number of bits Bob guesses.

- Mathematical definition: An integer variable, $0 \le s_2 \le 2(N-t)-s_1$.

- Physical/logical role: Another strategic choice by a malicious Bob. After delaying measurements and potentially gaining some information, he might still need to guess additional bits to fully reconstruct both of Alice's messages. The upper bound $2(N-t)-s_1$ reflects the total information needed for two messages, considering error correction capabilities and already acquired bits.

-

$2^{s_2}$: Computational cost of guessing $s_2$ bits.

- Mathematical definition: An exponential term.

- Physical/logical role: This quantifies the computational effort Bob must expend if he has to guess $s_2$ bits. Each bit has two possibilities, so $s_2$ bits have $2^{s_2}$ combinations. This term acts as a "penalty" for Bob's guessing strategy.

- Why exponentiation?: Guessing is a multiplicative process in terms of possibilities. If you guess one bit, there are 2 options. If you guess two, $2 \times 2 = 4$ options, and so on.

-

$P_{bypass, s_1}$: Probability that Bob bypasses the initial commitment check by delaying $s_1$ measurements.

- Mathematical definition: Defined by Equation 3 in the paper:

$$ P_{bypass, s_1} = \sum_{i=0}^{s_1} \binom{s_1}{i} \binom{n-s_1}{\alpha n - i} \text{Pr[check passed]} $$ - Physical/logical role: This is the probability that a malicious Bob successfully deceives Alice during the crucial commitment test phase (steps 3-5 of the protocol). Bob attempts to hide his true measurements for $s_1$ qubits, but still needs to pass Alice's verification test.

- Terms within $P_{bypass, s_1}$:

- $\sum$: Summation, used because Bob can pass the check through various combinations of how the test bits fall into his delayed vs. immediately measured sets.

- $i$: Index of summation, representing the number of "lucky" bits among the $s_1$ delayed qubits that happen to be part of the test set and contribute to passing the check.

- $\binom{s_1}{i}$: Number of ways to choose $i$ bits from the $s_1$ delayed qubits.

- $\binom{n-s_1}{\alpha n - i}$: Number of ways to choose the remaining $\alpha n - i$ bits for the test set from the $n-s_1$ qubits Bob measured immediately.

- $\text{Pr[check passed]}$: The probability that Alice's test (step 4) passes, given a certain number of matching bits. This is a conditional probability defined by Equation 4 in the paper:

$$ \text{Pr[check passed]} = \begin{cases} 1 & \text{if } \alpha n - i \ge \beta \alpha n \\ \sum_{j=\lceil i-(1-\beta)\alpha n \rceil}^{\alpha n - i} \binom{\alpha n - i}{j} \frac{1}{2^{\alpha n - i}} & \text{otherwise} \end{cases} $$- Physical/logical role: This term ensures that even if Bob attempts to cheat, he must still satisfy Alice's verification criteria. If the number of correct bits ($\alpha n - i$) in the test set is already above the threshold ($\beta \alpha n$), the check passes with probability 1. Otherwise, Bob must rely on guessing (with probability $1/2^{\alpha n - i}$) to make up the difference, summing over $j$ successful guesses. The $\lceil \dots \rceil$ ensures an integer count for the minimum required guesses.

- Mathematical definition: Defined by Equation 3 in the paper:

-

$P_{cheat, s_1, s_2}$: Probability that Bob successfully reconstructs both messages given $s_1$ delayed measurements and $s_2$ guesses.

- Mathematical definition: Defined by Equation 5 in the paper:

$$ P_{cheat, s_1, s_2} = \frac{1}{2^{(1-\alpha)n}} \sum_{i=2(N-t)-s_1-s_2}^{(1-\alpha)n} \binom{(1-\alpha)n}{i} $$ - Physical/logical role: This is the probability of Bob succeeding in the second stage of his cheating strategy, after having bypassed the commitment check. It quantifies the likelihood of him obtaining enough information to decode both $m_0$ and $m_1$.

- Terms within $P_{cheat, s_1, s_2}$:

- $(1-\alpha)n$: The number of bits in the non-test set, which are the actual message-carrying bits.

- $2(N-t)-s_1-s_2$: The minimum number of "correct" bits Bob needs from the $(1-\alpha)n$ non-test bits to successfully decode both messages, considering the $s_1$ bits he delayed and $s_2$ bits he guessed. This threshold is derived from the error correction requirement of $N-t$ matching bits for each of the two messages.

- $\binom{(1-\alpha)n}{i}$: Number of ways to choose $i$ correct bits from the $(1-\alpha)n$ non-test bits.

- $1/2^{(1-\alpha)n}$: This factor implies that for the $(1-\alpha)n$ bits, Bob has a $1/2$ chance of guessing each bit correctly if he doesn't have the correct basis. It normalizes the sum, representing the probability of a specific outcome sequence.

- Mathematical definition: Defined by Equation 5 in the paper:

-

$P_{bypass, s_1} \cdot P_{cheat, s_1, s_2}$: The overall probability of Bob successfully cheating using a specific strategy $(s_1, s_2)$.

- Mathematical definition: A product of two probabilities.

- Physical/logical role: These two events (bypassing the commitment and then successfully reconstructing messages) are sequential and conditionally independent. The multiplication gives the total probability of a successful cheat attempt.

- Why multiplication?: For independent events, the probability of both occurring is the product of their individual probabilities.

-

$\frac{2^{s_2}}{P_{bypass, s_1} \cdot P_{cheat, s_1, s_2}}$: The expected cost.

- Mathematical definition: A ratio.

- Physical/logical role: This term represents the expected number of "cost units" (e.g., computational operations for guessing) Bob needs per successful cheat attempt. If an event has probability $p$, the expected number of trials until success is $1/p$. Here, $2^{s_2}$ scales this by the guessing effort.

- Why division?: It's a standard way to calculate expected cost or number of attempts: total cost (or effort) divided by the probability of success.

It's worth noting that the paper also defines $P_{fpass}$ (Eq. 1) and $P_{fcorrect}$ (Eq. 2) for the correctness of the protocol for honest parties. These are crucial for ensuring the protocol works as intended without errors or false aborts.

- $P_{fpass} = \sum_{i=0}^{\beta \alpha n} \binom{\alpha n}{i} p_e^i (1-p_e)^{\alpha n - i}$: Probability of false failure to pass Alice's test.

- Physical/logical role: This is the probability that honest Alice and Bob, due to random bit flip errors ($p_e$), fail the test in steps 3-5, leading to an unnecessary abort. This should be kept low.

- $P_{fcorrect} = 1 - \frac{1}{2^{n-\alpha n}} \sum_{i=N-t}^{n-\alpha n} \binom{n-\alpha n}{i} \left( \sum_{j=N-t}^{N} \binom{N}{j} p_e^j (1-p_e)^{N-j} \right) \left( \sum_{k=N-t-j}^{N-i} \binom{N-i}{k} \frac{1}{2^{N-i}} \right)$: Probability of failure of error correction in step 9.

- Physical/logical role: This is the probability that, for honest parties, the error correction fails, preventing Bob from correctly decoding his chosen message. This also needs to be very low for the protocol to be practical. To be honest, I’m not completely sure about the precise interpretation of the indices and the $1/2^{N-i}$ term in the context of error correction failure as presented in the paper's formula, but it generally represents the chance of not having enough matching bits for successful decoding across the message segments.

Step-by-Step Flow

Let's trace the journey of a single abstract data point, say Alice's message bit $m_c$ (where $c$ is Bob's choice), through the Quantum Oblivious Transfer (QOT) protocol. Imagine this as a sophisticated assembly line for secure information.

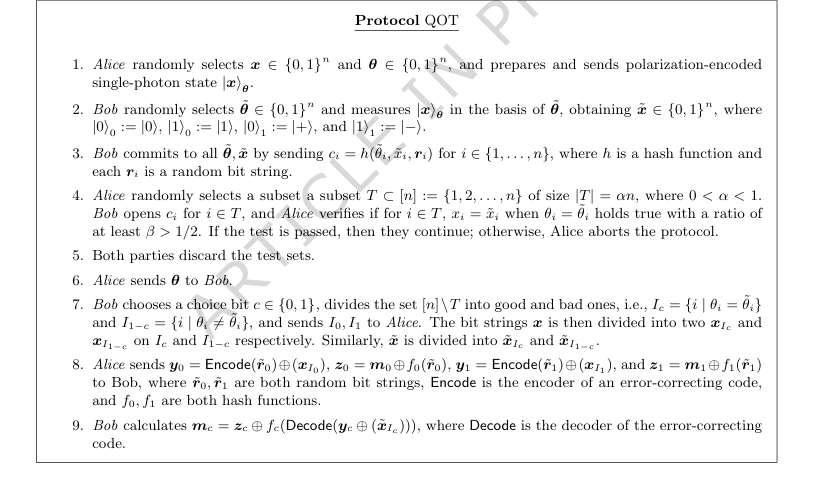

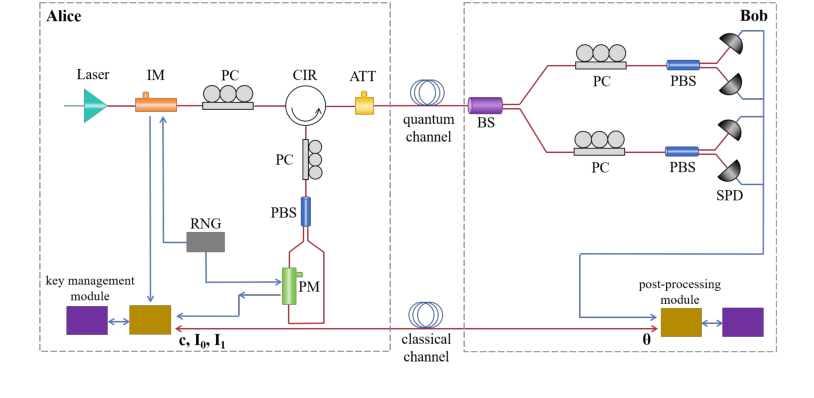

Figure 2. Quantum Oblivious Transfer Protocol

Figure 2. Quantum Oblivious Transfer Protocol

-

Quantum State Preparation (Alice): For each round $i$, Alice starts with a secret bit $x_i$ and a random basis $\theta_i$. She prepares a single-photon quantum state, $|x_i\rangle_{\theta_i}$, which is a tiny packet of light (a photon) whose polarization (e.g., horizontal/vertical or diagonal) encodes $x_i$ in the $\theta_i$ basis. This is the raw material entering the system.

-

Quantum Measurement (Bob): This photon travels through a quantum channel to Bob. Bob, without knowing Alice's $\theta_i$, randomly chooses his own measurement basis $\tilde{\theta}_i$. He then measures the incoming photon, collapsing its quantum state and yielding a classical bit $\tilde{x}_i$. If Bob's $\tilde{\theta}_i$ happens to match Alice's $\theta_i$, then $\tilde{x}_i$ will almost certainly be equal to $x_i$. If they don't match, $\tilde{x}_i$ will be random (0 or 1 with 50% probability). This is the first transformation of the data point from quantum to classical, and it's where Bob's "obliviousness" begins.

-

Commitment (Bob): Before Alice reveals anything about her bases, Bob must "commit" to his measurement choices and outcomes. For each round $i$, he takes his measured bit $\tilde{x}_i$, his chosen basis $\tilde{\theta}_i$, and a random string $r_i$, and feeds them into a cryptographic hash function $h$. The output, $c_i = h(\tilde{\theta}_i, \tilde{x}_i, r_i)$, is a digital fingerprint. He sends this $c_i$ to Alice. This commitment acts like sealing his choices in a tamper-proof envelope, preventing him from changing his mind later.

-

Verification Test (Alice & Bob): Alice randomly selects a subset of these commitments (the "test set" $T$). She asks Bob to "open" these specific envelopes. Bob reveals the original contents $(\tilde{\theta}_i, \tilde{x}_i, r_i)$ for $i \in T$. Alice re-calculates the hash $h(\tilde{\theta}_i, \tilde{x}_i, r_i)$ and checks if it matches the $c_i$ Bob sent earlier. She also checks if the quantum channel was reliable by comparing $x_i$ and $\tilde{x}_i$ for rounds where $\theta_i = \tilde{\theta}_i$. If too many errors are found, or if Bob's commitments don't match, Alice aborts the protocol, and the data flow stops. This is a quality control and fraud detection step.

-

Discarding Test Data: The data points from the test set $T$ are discarded by both parties. They served their purpose for verification and are not used for the actual message transfer.

-

Basis Revelation (Alice): For all remaining rounds (not in $T$), Alice reveals her original encoding bases $\theta_i$ to Bob. This is a critical information release from Alice.

-

Data Partitioning (Bob): Now Bob knows which of his measurements were "good" (where $\theta_i = \tilde{\theta}_i$) and which were "bad" (where $\theta_i \neq \tilde{\theta}_i$). Based on his secret choice bit $c$, he forms two sets: $I_c$ (containing indices where $\theta_i = \tilde{\theta}_i$) and $I_{1-c}$ (containing indices where $\theta_i \neq \tilde{\theta}_i$). He sends the labels of these sets ($I_0$ and $I_1$) to Alice, but Alice still doesn't know which one corresponds to Bob's choice $c$. This is where Bob's choice begins to shape the data path.

-

Message Encoding (Alice): Alice has two messages, $m_0$ and $m_1$. She generates two random bit strings, $r_0$ and $r_1$. She then uses an error-correcting code (Encode) and hash functions ($f_0, f_1$) to prepare two masked data packets:

- $y_0 = \text{Encode}(r_0) \oplus x_{I_c}$ (where $x_{I_c}$ are Alice's bits corresponding to Bob's "good" measurements)

- $y_1 = \text{Encode}(r_1) \oplus x_{I_{1-c}}$ (where $x_{I_{1-c}}$ are Alice's bits corresponding to Bob's "bad" measurements)

She also prepares $z_0 = m_0 \oplus f_0(r_0)$ and $z_1 = m_1 \oplus f_1(r_1)$. She sends $y_0, y_1, z_0, z_1$ to Bob. This is where Alice's messages are prepared for transfer, using the shared randomness and error correction.

-

Message Decoding (Bob): Bob, knowing his choice bit $c$, selects the corresponding $y_c$ and uses his own "good" measured bits $\tilde{x}_{I_c}$ to recover $r_c = \text{Decode}(y_c \oplus \tilde{x}_{I_c})$. The error-correcting code helps him recover $r_c$ even if some bit flips occurred. Finally, he uses $r_c$ to unmask his chosen message: $m_c = z_c \oplus f_c(r_c)$. He cannot recover $r_{1-c}$ or $m_{1-c}$ because he doesn't have the correct $\tilde{x}_{I_{1-c}}$ (due to the mismatched bases, which means $\tilde{x}_{I_{1-c}}$ is essentially random noise). This is the final stage where Bob receives his chosen message, and the other message remains hidden from him.

This entire process ensures that Bob gets exactly one message, and Alice learns nothing about Bob's choice, making the transfer "oblivious" and "secure".

Optimization Dynamics

The QOT protocol, unlike a machine learning algorithm, does not "learn" or "update" its internal parameters iteratively through gradients or a loss function in the traditional sense. Instead, its "optimization dynamics" are primarily concerned with:

-

Pre-Protocol Parameter Optimization: Before the protocol even begins, Alice and Bob (or the system designers) must carefully choose various parameters to ensure both correctness and security.

- Correctness Parameters: Parameters like $\alpha$ (ratio of test bits), $\beta$ (minimum acceptable bit matching ratio), $N$ (error correction code length), and $t$ (maximum correctable errors) are selected to minimize the probabilities of honest failure, $P_{fpass}$ and $P_{fcorrect}$. For instance, Alice sets a range for Bob's photon missing ratio and an $\epsilon$ threshold for test pass probability to ensure the protocol proceeds reliably for honest parties.

- Security Parameters: The choice of $n$ (total rounds), the strength of the hash function $h$, and the error-correcting code are all designed to maximize the $cost_{cheat}$ for any malicious Bob. The paper also mentions optimizing the mean photon numbers ($\mu, \nu, 0$) and their probabilities ($P_1, P_2, 1-P_1-P_2$) in the decoy-state BB84 protocol to prevent photon number splitting attacks, which is a form of pre-protocol optimization for quantum security.

-

Adversarial Optimization Landscape: The $cost_{cheat}$ equation itself represents an optimization problem faced by a malicious Bob. Bob's "learning" or "optimization" is to find the values of $s_1$ (delayed measurements) and $s_2$ (guessed bits) that minimize the cost of cheating. The protocol is designed such that this minimum cost is astronomically high (e.g., $3.8 \times 10^{12}$), effectively shaping an "adversarial landscape" where all paths to cheating are extremely arduous. The protocol's security relies on the fact that no matter how cleverly Bob optimizes his strategy, the cost remains prohibitive.

-

Dynamic Abort Mechanism: The protocol incorporates a crucial dynamic element: the abort condition in Step 4. If the error rate in the test phase (due to noise or Bob's malicious actions) exceeds a predefined threshold (dictated by $\beta$), the protocol immediately aborts. This prevents the protocol from proceeding in an insecure state or when the channel quality is too poor. This is a real-time "state update" that halts execution if security or correctness cannot be guaranteed.

-

Error Correction as Data Update: In Step 9, Bob uses an error-correcting code to recover $r_c$. This mechanism dynamically "updates" the potentially corrupted bit string $r_c$ by correcting bit flips up to the code's capability $t$. This ensures that noise in the quantum channel does not compromise the integrity of the transferred information, allowing the protocol to converge to the correct message $m_c$.

In essence, the "optimization dynamics" here are less about continuous learning and more about a robust design with pre-calculated parameters, an adversary's calculated optimal attack strategy, and built-in safeguards that ensure the protocol either succeeds securely or fails safely.

Figure 3. Schematic of experimental setup

Figure 3. Schematic of experimental setup

Results, Limitations & Conclusion

Experimental Design & Baselines

To rigorously validate their quantum oblivious transfer (QOT) protocol, the authors embarked on a comprehensive experimental setup. The core of their design involved implementing a 1-out-of-2 QOT protocol, which was then applied to solve a real-world Private Set Intersection (PSI) problem. Unlike prior QOT protocols that often relied on the noisy storage model, this work distinguished itself by integrating a bit commitment scheme, thereby bolstering its theoretical security against quantum attacks.

The physical implementation of Alice's quantum device utilized a weak coherent laser source combined with the decoy state method. This architecture was specifically chosen to counteract photon number splitting (PNS) attacks, a common vulnerability in practical quantum communication. Alice's setup included an intensity modulator (IM) to generate signal, decoy, and vacuum states, and a polarization encoding module comprising a polarization controller (PC), a polarization beam splitter (PBS), and a phase modulator (PM). Bob, on the receiving end, employed a beam splitter (BS) to direct photons for measurement in either the Z or X basis, followed by PCs, PBSs, and single-photon detectors (SPDs) for projective measurements.

For the practical application, the QOT protocol was seamlessly integrated into an optimized Oblivious Pseudorandom Function (OPRF) based PSI protocol, as detailed in reference [32]. The "victims" (baselines) in this experimental validation were classical OT-based PSI implementations. The experiments were conducted using both simulated and real-world datasets. The real-world scenario involved two financial institutions: one providing a blacklist of suspicious accounts and the other supplying a list of active client accounts. The objective was to securely identify common suspicious accounts without either bank revealing any other private client information. This setup was meticulously designed to provide definitive, undeniable evidence that their quantum-secure mechanism could function effectively and practically, directly addressing a pressing need for privacy-preserving computation in sensitive domains like finance.

What the Evidence Proves

The evidence presented in this paper robustly demonstrates the functionality, security, and practical applicability of their QOT protocol. First and foremost, the experiments confirm the successful operation of the QOT protocol itself, showcasing its ability to perform oblivious transfer in a quantum-secure manner.

A critical aspect of their validation was the rigorous analysis of the protocol's security against malicious adversaries. The authors quantified the "cheating cost" for a dishonest Bob attempting to bypass the bit commitment scheme and acquire both of Alice's messages. This cost, defined as the expected time for a successful cheating attempt, was calculated to be approximately $3.8 \times 10^{12}$. To put this into perspective, if a single execution of the protocol takes one second, a malicious Bob would, on average, require about 120,000 years to succeed in cheating just once. This staggering figure provides compelling, undeniable evidence that the core bit commitment mechanism effectively secures the protocol against quantum attacks, rendering cheating practically negligible in any real-world deployment. Furthermore, the use of the decoy-state BB84 protocol was experimentally shown to be effective against PNS attacks, with Alice being able to detect deviations in Bob's reported photon click statistics if he attempts such an attack.

Beyond the foundational QOT, the paper provides hard evidence of its scalability and practicality when applied to the Private Set Intersection (PSI) problem. Experiments with set sizes up to $10^5$ elements demonstrated that both communication cost and runtime scaled nearly linearly with the number of elements. For instance, processing $10^5$ elements per party resulted in a total communication of approximately 20MB and a runtime below 0.4 seconds on a 1Gbps network. These performance metrics are highly practical for many real-world applications, particularly in sectors like banking and fraud detection.

Figure 4. Comparison of communication cost and runtime between QOT-based and OT-based PSI experiments

Figure 4. Comparison of communication cost and runtime between QOT-based and OT-based PSI experiments

Crucially, when benchmarked against classical OT-based PSI, the QOT-based PSI incurred only a slightly higher communication overhead, while maintaining nearly identical runtimes. This is a profound finding, as it proves that the enhanced quantum security offered by their QOT protocol does not necessitate a significant performance penalty compared to its classical counterparts. The successful identification of common suspicious telecommunications fraud bank accounts between two simulated banks stands as a definitive real-world application, showcasing the commercial viability and experimental functionality of their QOT-based secure multiparty computation.

Limitations & Future Directions

While the experimental results are highly encouraging, demonstrating a robust and practical quantum-secure oblivious transfer protocol, it's important to acknowledge certain limitations and consider avenues for future development.

One practical limitation observed is the impact of optical attenuation on the protocol's performance. As channel loss increases, the number of detected photons decreases, consequently lowering the code rate (Table 1). While the information-theoretic security guarantees remain intact, this affects the overall practicality in terms of throughput and maximum transmission distance. Future research could explore more robust quantum channel implementations or advanced error correction techniques to mitigate the effects of high attenuation and extend the practical range of QOT.

Another point to consider is the protocol's reliance on a test phase (Step 4) where, if the bit-error rate becomes too high, the protocol aborts. While this ensures security, it implies a trade-off with reliability under extremely noisy conditions. Further work might investigate adaptive strategies or more resilient error-correction codes that allow the protocol to proceed even with higher error rates, perhaps by dynamically adjusting parameters or employing more sophisticated post-processing.

The paper itself points towards potential optimization methods, such as Oblivious Transfer (OT) extension techniques and the use of Beaver triples. OT extension allows for generating a large number of OTs from a small set of base OTs, significantly reducing the quantum resources required. Beaver triples, pre-generated correlated randomness, can enable MPC protocols to run more efficiently in the online phase by offloading complex computations to an offline phase. Implementing and experimentally validating these optimizations would be a natural next step to further reduce computation and communication costs, making the protocol even more scalable and efficient for larger datasets and more complex functions.

Looking forward, the demonstrated framework has broad implications beyond Private Set Intersection. The authors suggest its applicability to other secure multiparty computation problems, including privacy-preserving machine learning and anonymous voting systems. This opens up a rich field for future research, where the QOT protocol could serve as a foundational primitive for a new generation of quantum-secure MPC applications. Exploring these diverse applications would involve adapting the protocol to different computational tasks and evaluating its performance and security in those specific contexts.

Finally, the protocol's security relies on the existence of quantum-secure bit commitment, which is built upon collision-resistant hash functions—a weaker and more foundational cryptographic assumption compared to those underpinning classical or post-quantum OT. This is a strength, but it also invites further theoretical exploration into the minimal assumptions required for quantum cryptography and how these might evolve with advancements in quantum computing and cryptanalysis. A deeper comparative analysis of performance and security trade-offs between bit-commitment-based QOT and noisy-storage-model QOT, especially as quantum memory technology advances, would also be a valuable discussion point for the community. The current lack of public data availability due to confidentiality agreements is a practical limitation for independent verification and further research, suggesting a need for standardized, anonymized datasets for future benchmarking.

Table 2. Security assumptions for OT protocols

Table 2. Security assumptions for OT protocols

Table 1. Dependence of the QOT code rate on optical attenuation. The code rate is defined as the number of successfully transmitted qubits per second (kbps)

Table 1. Dependence of the QOT code rate on optical attenuation. The code rate is defined as the number of successfully transmitted qubits per second (kbps)