Improving Quantum Machine Learning via Heat-Bath Algorithmic Cooling

This work introduces an approach rooted in quantum thermodynamics to enhance sampling efficiency in quantum machine learning (QML).

Background & Academic Lineage

The Origin & Academic Lineage

The problem addressed in this paper originates from the intersection of quantum information processing (QIP) and data science, giving rise to the field of Quantum Machine Learning (QML). While QML holds immense promise for tackling complex data distributions beyond classical capabilities, it faces inherent challenges due to the probabilistic nature of quantum measurements. This probabilistic nature means that both the training and inference phases of QML algorithms are susceptible to "finite sampling errors" when extracting information from quantum states, such as expectation values of observables.

Historically, attempts to mitigate these sampling errors, like adapting Quantum Amplitude Estimation (QAE), offered quadratic improvements. However, QAE protocols demand multiple rounds of complex Grover-like operations, which are computationally intensive and severely limit their feasibility on current Noisy Intermediate-Scale Quantum (NISQ) devices. Furthermore, for many machine learning tasks, particularly classification, QAE provides an "excessive" level of precision. Often, it is sufficient to determine merely the sign of a measured statistic (e.g., whether a classification score is positive or negative) rather than its exact magnitude. This realization highlighted the need for more practical and efficient sampling reduction techniques that could surpass the quadratic improvements of QAE while being compatible with the limitations of NISQ hardware.

The fundamental limitation, or "pain point," of previous approaches that compelled the authors to develop this work is twofold. Firstly, existing methods like QAE are too resource-intensive and complex (requiring many Grover-like operations) for practical implementation on current NISQ hardware, making real-time application impossible. Secondly, conventional algorithmic cooling techniques, which inspired this work, are inherently "unidirectional." They are designed to increase the population of a predetermined basis state (e.g., $|0\rangle$), requiring prior knowledge of the desired output's sign. In supervised QML, however, the sign of the classification score (which determines the label or gradient direction) is precisely the unknown quantity we aim to determine. This lack of "bidirectional" capability in prior cooling methods meant they could not be directly applied to QML classification problems where the bias direction is initially unknown. This paper directly addresses these limitations by introducing a novel, sign-preserving, bidirectional cooling approach.

Intuitive Domain Terms

- Quantum Machine Learning (QML): Imagine a regular computer trying to learn patterns from data, like a student studying for an exam. QML is like giving that student a magical quantum brain that can process information in entirely new ways, allowing them to learn much faster or discover patterns that a regular student might miss, especially with very complex data.

- Noisy Intermediate-Scale Quantum (NISQ) devices: Think of NISQ devices as the first generation of electric cars. They are revolutionary and show incredible potential, but they have limited battery life, are somewhat unreliable, and can only perform certain tasks before needing to recharge or be fixed. They're not yet ready for long road trips, but they're a crucial step towards fully functional quantum vehicles.

- Heat-Bath Algorithmic Cooling (HBAC): Picture a messy desk where important documents are mixed with junk. HBAC is like a meticulous assistant who tidies up in two steps: first, they gather all the important documents into one neat pile and push the junk to another part of the desk (entropy compression). Second, they throw away the junk from the desk (thermalization/reset), leaving the important documents much more organized and accessible. HBAC is the technique on which this work builds.

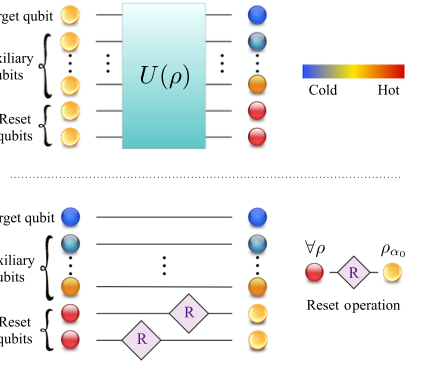

FIG. 1. A round of the standard heat-bath algorithmic cooling protocol. (a) Step 1. Unitary Step: Entropy compression. A global unitary operation U(ρ) acting on the target, auxiliary, and reset qubits coherently redistributes entropy across the entire register. This operation extracts entropy from the target and auxiliary qubits and concentrates it in the reset subsystem, thereby increasing the population of the target qubit toward its ground state (colder, blue states). (b) Step 2. Dissipative Step: Thermalization or Reset Operations. The reset qubits are refreshed through interaction with a heat bath, removing the accumulated entropy. The illustration corresponds to the case of full thermalization, which is operationally equivalent to replacing the reset qubits with fresh qubits prepared in the bath state $\rho_{\alpha_0}$, illustrated here for the case of m = 2 reset qubits. Repeated iteration of these two steps constitutes the standard heat-bath algorithmic cooling protocol. The color gradient from blue to red indicates the polarization of the qubits, from colder (blue) to hotter (red) states.


- Polarization (of a qubit): Imagine a compass needle. If it's perfectly balanced, it might point anywhere (low polarization). If it's strongly pulled towards North, it has high polarization towards North. For a quantum bit (qubit), polarization describes how strongly it favors being in one specific state (like '0') over another (like '1'). High polarization means a much clearer, more reliable 'yes' or 'no' answer from the qubit.

- Entropy Compression: Consider a group of people randomly scattered in a large hall (high entropy). Entropy compression is like a clever event organizer who, without removing anyone, guides all the VIPs to a designated, easily accessible area, while the rest of the attendees remain spread out. The overall number of people (information) in the hall is the same, but the "important" part is now much more concentrated and orderly.

Notation Table

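| Notation | Description |
|---|---|
| $\alpha(x, \theta)$ | Z-polarization of the target qubit; its sign encodes the label or gradient direction |
| $q(x, \theta)$ | Classification score, equal to $\alpha(x, \theta)$ |
| $k$ | Number of measurement repetitions (shots) |
| $\rho_T$ | Density matrix of the $n$-qubit register |
| $n$, $m$ | Number of qubits in the register and number of reset qubits |
| $U_{\text{QR}}(n)$ | Entropy-compression unitary of the quantum refrigerator |
| $\rho_a$ | Bath state in which fresh reset qubits are prepared |
| $\text{Tr}_m$ | Partial trace over the $m$ reset qubits |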

Problem Definition & Constraints

Core Problem Formulation & The Dilemma

The central problem addressed by this paper is the inherent finite sampling error in Quantum Machine Learning (QML) algorithms, particularly Variational Quantum Binary Classifiers (VQBCs). This error arises from the probabilistic nature of quantum measurements, which are fundamental to both the training and prediction phases of QML.

Input/Current State:

In the current state, QML algorithms process quantum data representations, and their output (e.g., a classification label or a gradient direction) is encoded in the sign of a measured statistic, specifically the Z-polarization $\alpha(x, \theta)$ of a target qubit. This polarization is derived from a single-qubit density operator $\rho_1(x, \theta) = \frac{I + \alpha(x, \theta)Z + \beta(x, \theta)X + \gamma(x, \theta)Y}{2}$, where the classification score is $q(x, \theta) = \alpha(x, \theta)$. To estimate this score, a finite number of measurement repetitions (shots), denoted by $k$, are performed. This finite $k$ leads to an estimation error. For robust prediction, the estimated expectation value $\mu$ must satisfy $|\mu - \langle M \rangle| < |\langle M \rangle|$, where $\langle M \rangle$ is the true expectation value. For training, the estimation error for $q(x_j, \theta)$ must be smaller than $|q(x_j, \theta) - b|$ to accurately assess hinge loss gradients. The error probabilities for these tasks are bounded from above, for instance, for prediction:

$$ \text{Pr[error]} = \text{Pr}[|\mu - \langle M \rangle| \geq |\langle M \rangle|] \leq \frac{1 - \alpha^2(x, \theta^*)}{k\alpha^2(x, \theta^*)} $$

and for training:

$$ \text{Pr[error]} \leq \frac{1 - \alpha^2(x_j, \theta)}{k(\alpha(x_j, \theta) - b)^2} $$

These bounds highlight that a smaller magnitude of $|\alpha(x, \theta)|$ necessitates a larger number of shots $k$ to maintain a low error probability. Crucially, the sign of $\alpha(x, \theta)$ is not known a priori in the QML context, as it represents the very information (label or gradient direction) that the algorithm aims to determine.
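To make the scaling concrete, the prediction bound above can be inverted to give a sufficient shot count for a target error probability. The following is a minimal sketch, not code from the paper; the function names and the tolerance parameter `delta` are illustrative assumptions.

```python
import math


def chebyshev_error_bound(alpha: float, k: int) -> float:
    """Upper bound on the sign-error probability after k shots (prediction case)."""
    return (1.0 - alpha**2) / (k * alpha**2)


def shots_needed(alpha: float, delta: float) -> int:
    """Smallest k for which the bound above is at most delta (hypothetical helper)."""
    if alpha == 0 or delta <= 0:
        raise ValueError("alpha must be nonzero and delta must be positive")
    return max(1, math.ceil((1.0 - alpha**2) / (delta * alpha**2)))


# The required number of shots grows roughly as 1 / alpha^2 for small |alpha|.
for alpha in (0.05, 0.1, 0.2, 0.4):
    print(f"|alpha| = {alpha}: k >= {shots_needed(alpha, delta=0.05)}")
```

Because the bound depends only on $\alpha^2$, doubling the polarization magnitude cuts the sufficient shot count by roughly a factor of four, which is the lever the cooling protocol pulls.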

Desired Endpoint (Output/Goal State):

The desired endpoint is to significantly reduce these finite sampling errors, thereby minimizing the number of measurement repetitions $k$ required for accurate classification and gradient estimation. This is to be achieved by increasing the magnitude of the target qubit's polarization, $|\alpha(x, \theta)|$, to a new, enhanced value $|\alpha'(x, \theta)|$. The key requirement is that this enhancement must be bidirectional, meaning the sign of the polarization must be preserved while its magnitude is increased. The target transformation for the single-qubit density matrix is:

$$ \frac{I + \alpha Z}{2} \rightarrow \frac{I + \alpha' Z}{2} \quad \text{with} \quad \begin{cases} \alpha' > \alpha & \text{if } \alpha > 0 \\ \alpha' < \alpha & \text{if } \alpha < 0 \end{cases} $$

This process should enhance sample efficiency in both training and prediction phases without relying on computationally expensive operations like Grover iterations or quantum phase estimation.

Missing Link & The Dilemma:

The missing link is an efficient, sign-preserving mechanism to amplify the polarization magnitude of a target qubit in a QML context. Previous algorithmic cooling techniques, such as Heat-Bath Algorithmic Cooling (HBAC), are "unidirectional"; they are designed to increase the population of a predetermined basis state (e.g., $|0\rangle$). This requires prior knowledge of the desired output (i.e., the sign of $\alpha(x, \theta)$), which is precisely the unknown information in QML classification. This creates a dilemma: to use conventional cooling to improve accuracy, one would need to know the answer beforehand, rendering the cooling process moot for classification. The paper attempts to bridge this gap by developing a novel "bidirectional" cooling protocol that works irrespective of the initial sign of polarization.
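The dilemma can be illustrated on three identical copies of a qubit with polarization $\alpha$. The sketch below is a toy example, not the paper's protocol, and both compression maps are stand-ins chosen for illustration. A sort-based compression always cools toward $|0\rangle$ and therefore loses a negative sign. Swapping $|011\rangle$ and $|100\rangle$ puts the majority bit on the first qubit and keeps the sign. All function names are hypothetical.

```python
import math
from itertools import product


def three_copy_distribution(alpha):
    """Populations of the 8 bit strings for three independent qubits with Z-polarization alpha."""
    p0 = (1 + alpha) / 2
    return {
        bits: math.prod(p0 if b == 0 else 1 - p0 for b in bits)
        for bits in product((0, 1), repeat=3)
    }


def target_polarization(dist):
    """Z-polarization of the first qubit: P(0) - P(1)."""
    return sum(p if bits[0] == 0 else -p for bits, p in dist.items())


def sort_compression(dist):
    """Unidirectional stand-in: largest populations go to the lowest bit strings."""
    ordered_bits = sorted(dist)                          # 000, 001, ..., 111
    ordered_probs = sorted(dist.values(), reverse=True)  # largest first
    return dict(zip(ordered_bits, ordered_probs))


def majority_compression(dist):
    """Bidirectional stand-in: swap |011> and |100> so qubit 1 holds the majority bit."""
    swap = {(0, 1, 1): (1, 0, 0), (1, 0, 0): (0, 1, 1)}
    return {swap.get(bits, bits): p for bits, p in dist.items()}


for alpha in (0.2, -0.2):
    dist = three_copy_distribution(alpha)
    print(alpha,
          target_polarization(sort_compression(dist)),      # always non-negative
          target_polarization(majority_compression(dist)))  # keeps the sign of alpha
```

For this toy case the majority map yields $\alpha' = (3\alpha - \alpha^3)/2$, which has the same sign as $\alpha$ and a larger magnitude for $0 < |\alpha| < 1$, while the sort-based map returns $|\alpha'|$ regardless of the input sign.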

Constraints & Failure Modes

Enhancing QML sampling efficiency is made difficult by several realistic constraints:

1. Computational & Hardware Constraints:

* NISQ Device Limitations: The solution must be compatible with Noisy Intermediate-Scale Quantum (NISQ) devices. This means avoiding complex, deep circuits and operations like Grover iterations or quantum phase estimation, which are infeasible due to limited qubit counts, short coherence times, and high error rates.

* Computational Overhead: Any proposed method must minimize the computational overhead associated with estimating classification scores and gradients. Increasing the number of measurement shots ($k$) to reduce error is a direct trade-off with computational cost.

* Qubit Resource Limits: The number of available qubits is limited on current hardware. The protocol must be resource-efficient, ideally by recycling qubits to reduce the total number of qubits required.

2. Data-Driven & Algorithmic Constraints:

* Unknown Polarization Sign (Bidirectionality Requirement): As highlighted in the core problem, the sign of the target qubit's polarization $\alpha(x, \theta)$ is unknown a priori. This is a fundamental constraint that renders conventional, unidirectional algorithmic cooling protocols unsuitable. The cooling mechanism must operate correctly for both positive and negative initial polarizations without prior knowledge of which is which.

* Finite Sampling Errors: The inherent probabilistic nature of quantum measurements means that any estimation based on a finite number of shots will always have some error. The goal is to reduce this error, not eliminate it entirely, within practical limits.

* Barren Plateaus: While not directly solved by this paper, the issue of barren plateaus in variational quantum algorithms (where gradients vanish exponentially with the number of qubits) is a known challenge in QML. The paper notes its approach is an independent mechanism that can be used in conjunction with existing barren plateau mitigation strategies.

3. Physical Noise Constraints:

* Noisy Quantum Environment: Current quantum hardware is susceptible to various forms of noise and decoherence. The protocol must be robust against these imperfections.

* Specific Noise Models: The paper specifically considers generalized amplitude damping (GAD) and depolarizing channels, which are dominant noise mechanisms in NISQ devices. Because the protocol operates primarily within the subspace of diagonal states, it is resilient to coherence-degrading noise (e.g., dephasing, phase damping, control-phase fluctuations), which does not affect diagonal elements.

Failure Modes:

If these constraints are not met, or the problem is not adequately solved, the QML algorithms would face several failure modes:

* Inaccurate Classification: High sampling errors would lead to unreliable predictions, where the estimated label might differ from the true label.

* Ineffective Training: Incorrect gradient estimations due to high sampling errors would hinder the optimization process, preventing the model from converging to an optimal solution.

* Prohibitive Resource Consumption: Relying on an excessively large number of measurement shots or complex quantum operations would make QML impractical and unscalable for real-world applications on current and near-term quantum hardware.

* Non-applicability of Cooling: If cooling protocols cannot operate bidirectionally without prior knowledge of the polarization sign, they cannot be effectively integrated into QML classification tasks where this sign is the unknown output.

* Susceptibility to Noise: Protocols that are not robust to the noise characteristics of NISQ devices would yield inconsistent and unreliable results, negating any theoretical performance gains. The paper specifically tests its protocols under realistic NISQ noise conditions, including typical and worst-case regimes, to demonstrate robustness against noise occurring in real hardware.

Why This Approach

The Inevitability of the Choice

The core challenge in quantum machine learning (QML) algorithms, particularly for classification tasks, stems from the probabilistic nature of quantum measurements. Both training and inference phases necessitate extracting information from probability distributions, which inherently introduces finite sampling errors. This limitation directly impacts the reliability of classification scores and gradients, demanding a substantial number of repetitions (shots) to achieve accurate results.

Traditional state-of-the-art (SOTA) quantum methods, such as Quantum Amplitude Estimation (QAE), were deemed insufficient for this specific problem. While QAE theoretically offers a quadratic reduction in sampling errors, its reliance on complex Grover-like operations [13,14] renders it largely impractical and infeasible for Noisy Intermediate-Scale Quantum (NISQ) devices [15,16]. Furthermore, QAE provides an overly precise estimation of magnitude, which is often excessive for classification. For binary classification, only the sign of a measured statistic (polarization) is required, not its exact magnitude.

The authors realized that the critical need was to increase the magnitude of the classification score's polarization, $|\alpha(x, \theta)|$, to minimize the required number of measurements. However, this increase cannot be achieved by a local unitary process on the single measured qubit, because such an operation cannot alter the purity of its state. This led to the consideration of algorithmic cooling techniques.

The exact moment the authors identified the insufficiency of existing methods was when they recognized that conventional algorithmic cooling protocols, which aim to increase the population of a predetermined basis state, were fundamentally unsuitable. In QML classification, the sign of $\alpha(x, \theta)$ (which determines the class label or gradient direction) is unknown a priori. Therefore, any viable solution must be a bidirectional protocol capable of dynamically transforming the single-qubit density matrix to increase polarization magnitude while preserving its unknown sign, as expressed by the transformation:

$$ \frac{I + \alpha Z}{2} \rightarrow \frac{I + \alpha' Z}{2} \quad \text{with} \quad \begin{cases} \alpha' > \alpha & \text{if } \alpha > 0 \\ \alpha' < \alpha & \text{if } \alpha < 0 \end{cases} $$

This requirement for sign-preserving, bidirectional polarization enhancement is what motivates the proposed quantum thermodynamic cooling approach.

Comparative Superiority

This quantum thermodynamic approach, which reframes supervised learning as a cooling process, has structural advantages over earlier approaches that go beyond raw performance metrics:

- Reduced Computational Overhead: The method significantly reduces the number of measurements required to estimate classification scores and gradients. By increasing the polarization of the target qubit, fewer shots are needed to achieve a desired precision, thereby minimizing the overall computational overhead. This is a direct advantage over methods requiring extensive sampling.

- Independence from Barren Plateaus: Unlike strategies that modify classifier structure or training procedures, this approach acts as an independent mechanism for reducing finite sampling error. It is effective regardless of whether the system exhibits a barren plateau, a common challenge in variational quantum algorithms [8,30]. It does not remove barren plateaus itself, but it leaves the underlying QML model unchanged and can be combined with existing mitigation strategies.

- Resource Efficiency and Convergence Rate: The Bidirectional Quantum Refrigerator (BQR) protocols employ a cyclic operation where enhanced qubits are extracted, and the remaining $n-1$ qubits are recycled as the working body for subsequent cooling rounds. This recycling mechanism substantially reduces the total qubit resources required to prepare multiple enhanced qubits (from $n + m N_{rounds}$ to $\sim m N_{rounds} + 1$) and significantly improves the convergence rate of the cooling process.

- Hardware Feasibility (k-local BQR): The introduction of the k-local BQR variant is a crucial structural advantage for practical implementation. By restricting compression operations to k-local neighborhoods, the protocol becomes significantly more hardware-friendly and easier to implement on current NISQ devices, while still retaining the core performance benefits of the more general BQR protocols. In some configurations, the k-local BQR can even outperform the Progressive Boundary Entropy Compression BQR in error-probability reduction.

- Noise Resilience: The protocol's design, which operates entirely within the subspace of diagonal states (resets, permutations, and conditional swaps preserve diagonality), makes its population dynamics inherently insensitive to small stochastic fluctuations. Crucially, noise processes that act solely on quantum coherences (e.g., dephasing, phase damping, control-phase fluctuations) have no measurable influence on the outcome, as they leave diagonal states invariant throughout the evolution. This intrinsic robustness against coherence-degrading noise is a significant qualitative advantage for NISQ compatibility.
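The claim that dephasing leaves diagonal states untouched can be checked on a single qubit. The sketch below assumes a phase-flip channel $\rho \to (1-p)\rho + p\,Z\rho Z$ as a simple dephasing model; the function names and the flip probability `p` are illustrative, and the example does not cover the amplitude damping or depolarizing channels studied in the paper.

```python
def dephase(rho, p):
    """Phase-flip channel on a 2x2 density matrix given as nested lists.

    Z rho Z flips the sign of the off-diagonal entries only, so the channel
    scales coherences by (1 - 2p) and leaves the populations unchanged.
    """
    (a, b), (c, d) = rho
    return [[a, (1 - 2 * p) * b], [(1 - 2 * p) * c, d]]


def z_polarization(rho):
    """Z-polarization: difference of the two populations."""
    return (rho[0][0] - rho[1][1]).real


diagonal = [[0.6, 0.0], [0.0, 0.4]]   # diagonal state with alpha = 0.2
coherent = [[0.6, 0.3], [0.3, 0.4]]   # same populations, nonzero coherences

print(dephase(diagonal, 0.25))        # identical to the input state
print(dephase(coherent, 0.25))        # coherences shrink, populations stay
print(z_polarization(dephase(coherent, 0.25)))
```

In both cases the Z-polarization, which is the only quantity the classifier reads out, is unaffected by the channel.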

Alignment with Constraints

The chosen method addresses each of the constraints inherent in QML identified above:

- Finite Sampling Errors: The primary motivation for this work is to reduce finite sampling errors. The BQR protocols directly address this by increasing the polarization magnitude of the measured qubit, which in turn reduces the number of measurements needed to achieve a desired estimation precision. This is the central problem the method is designed to solve.

- NISQ Device Compatibility: The protocol is explicitly designed for NISQ devices. Its avoidance of complex operations like Grover iterations and quantum phase estimation, combined with the development of hardware-friendly k-local compression unitaries, makes it practical and efficient for current quantum hardware. The numerical simulations under realistic NISQ noise conditions further demonstrate its robustness and suitability.

- Unknown Polarization Sign: A critical constraint for QML classification is that the sign of the classification score (polarization) is unknown a priori. Conventional algorithmic cooling fails here. The proposed "bidirectional cooling" is specifically engineered to enhance polarization magnitude regardless of its initial sign, thereby preserving the sign and enabling correct classification without prior knowledge.

- Computational Overhead: The method directly minimizes computational overhead by significantly reducing the number of measurement shots required for accurate classification scores and gradients. The recycling of qubits further optimizes resource usage, making the overall process more efficient than repeated, independent measurements.

- Barren Plateaus: The approach provides an independent mechanism to reduce sampling error, which is beneficial even in the presence of barren plateaus. It does not require altering the variational circuit structure or training strategy, thus complementing existing barren plateau mitigation techniques.

Rejection of Alternatives

The paper explicitly or implicitly rejects several popular approaches due to their fundamental limitations in addressing the specific problem constraints:

- Quantum Amplitude Estimation (QAE): QAE was rejected primarily due to its high resource cost and impracticality for NISQ devices. It requires multiple rounds of Grover-like operations [13,14], which are notoriously demanding on current quantum hardware [15,16]. Furthermore, QAE provides an estimation of magnitude that is often excessive for classification tasks, where only the sign of the polarization is needed. Its complexity outweighs the benefit for this particular problem.

- Conventional Algorithmic Cooling (AC) / Heat-Bath Algorithmic Cooling (HBAC): These traditional cooling techniques were rejected because they are unidirectional and require prior knowledge of the target state's bias. Conventional AC protocols are designed to increase the population of a predetermined basis state (e.g., $|0\rangle$). In QML classification, however, the sign of the classification score $\alpha(x, \theta)$ (which determines the correct label) is unknown before measurement. Therefore, a unidirectional cooling method that assumes a known bias direction cannot achieve the goal of enhancing polarization while preserving an unknown sign.

- Locally Unitary Processes on a Single Qubit: The authors noted that simply applying a locally unitary process to the single measured qubit would be insufficient because such an operation cannot alter the purity of the qubit's state [Section III]. To increase polarization, the entropy of the qubit must be reduced, which necessitates interaction with other qubits and a dissipative process, going beyond a single-qubit unitary transformation.
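The purity argument in the last item can be verified numerically. The following is a minimal sketch using plain 2x2 matrices; the helper names and the rotation parametrization are illustrative assumptions rather than anything defined in the paper. It applies single-qubit rotations to a diagonal state with polarization $\alpha = 0.2$ and checks that the purity $\text{Tr}[\rho^2]$ never changes, so the Z-polarization magnitude can never exceed its initial value.

```python
import cmath
import math


def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]


def dagger(a):
    """Conjugate transpose of a 2x2 matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]


def rotation(theta, phi):
    """Single-qubit unitary rotating by theta about an axis in the x-y plane."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -cmath.exp(-1j * phi) * s], [cmath.exp(1j * phi) * s, c]]


def apply_unitary(u, rho):
    """Return U rho U^dagger."""
    return matmul(matmul(u, rho), dagger(u))


def purity(rho):
    """Tr[rho^2]; equals (1 + |r|^2) / 2 for Bloch vector r."""
    sq = matmul(rho, rho)
    return complex(sq[0][0] + sq[1][1]).real


def z_polarization(rho):
    return complex(rho[0][0] - rho[1][1]).real


alpha = 0.2
rho = [[(1 + alpha) / 2, 0.0], [0.0, (1 - alpha) / 2]]
for theta in (0.0, 0.7, 1.5, 3.0):
    out = apply_unitary(rotation(theta, 0.3), rho)
    print(round(purity(out), 12), round(z_polarization(out), 12))
```

The rotation only redirects the Bloch vector without lengthening it, which is why extra qubits and a dissipative reset step are needed to raise the polarization.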

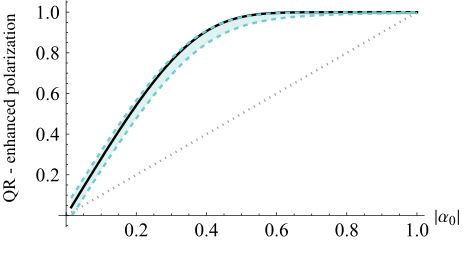

FIG. 10. Enhanced polarization $\alpha_{\text{QR}}$ of the quantum refrigerator, with the progressive boundary entropy compression-bidirectional quantum refrigerator protocol operating with n = 5 qubits and $N_{\text{rounds}}$ = 5 as a function of the initial polarization $|\alpha_0|$, under typical noise parameters representative of current noisy intermediate-scale quantum devices. The dotted straight line indicates the baseline initial polarization, while the solid black curve corresponds to the ideal noise-free enhancement. The green zone shows the expected polarization in the presence of typical noise channels: generalized amplitude damping and depolarizing noise.


Mathematical & Logical Mechanism

The Master Equation

The paper's core mechanism for enhancing sampling efficiency in Quantum Machine Learning (QML) is the Bidirectional Quantum Refrigerator (BQR) protocol. Specifically, the Progressive Boundary Entropy Compression BQR (PBEC-BQR) is a cyclic process. The core equation, which captures how the total quantum state evolves over one full round of this mechanism, is:

$$ \rho_T^{\text{QR round}}(\rho_T) := \text{Tr}_m[U_{\text{QR}}(n)\rho_T U_{\text{QR}}^\dagger(n)] \otimes \rho_a^{\otimes m} $$

This equation, found as Eq. (19) in the paper, describes the transformation of the total quantum state $\rho_T$ of an $n$-qubit register after one round of the PBEC-BQR protocol. It encapsulates both the unitary entropy compression and the dissipative thermalization steps.

Term-by-Term Autopsy

Let's dissect each component of this master equation to understand its mathematical definition, physical/logical role, and the rationale behind the chosen mathematical operations.

- $\rho_T$:

- Mathematical Definition: This is the density matrix representing the total quantum state of the $n$-qubit register before a round of the BQR. It is a positive-semidefinite Hermitian operator with trace equal to one.

- Physical/Logical Role: $\rho_T$ is the input state to a single round of the quantum refrigerator. It contains the quantum information of all $n$ qubits, including the target qubit whose polarization is to be enhanced, the auxiliary qubits that assist in entropy redistribution, and the reset qubits that will absorb entropy. It's essentially the "working fluid" of the cooling cycle.

- Why used: Density matrices are the standard formalism in quantum mechanics to describe the state of a quantum system, particularly when it is in a mixed state (a statistical ensemble of pure states) or entangled with other systems. This is crucial for modeling realistic quantum systems, especially those interacting with a thermal bath.

- $\rho_T^{\text{QR round}}(\rho_T)$:

- Mathematical Definition: This is the density matrix representing the total quantum state of the $n$-qubit register after one full round of the BQR protocol. It is the output state of the transformation.

- Physical/Logical Role: This is the updated state of the quantum refrigerator after it has performed one cycle of cooling. The goal of the protocol is that, within this output state, the target qubit's polarization magnitude has increased, making it "colder" or more biased. This state then serves as the input for the next round in a cyclic operation.

- Why used: This notation clearly indicates that the state $\rho_T$ undergoes a transformation defined by the "QR round" operation, yielding a new state.

- $U_{\text{QR}}(n)$:

- Mathematical Definition: This is a global unitary operator acting on the $n$-qubit register. For the PBEC-BQR, it is composed of a sequence of local unitary operations, $U_{C_j}$, applied in a staircase-like manner (e.g., $U_{\text{QR}}(n) = U_{C_n} (I_2 \otimes U_{C_{n-1}}) \dots (I_2^{\otimes (n-3)} \otimes U_{C_3})$ as shown in Eq. (B7)).

- Physical/Logical Role: This unitary operation performs the "entropy compression" step. It coherently redistributes entropy across the entire $n$-qubit register, effectively extracting entropy from the target and auxiliary qubits and concentrating it into the reset qubits. The key innovation here is its bidirectional nature: it amplifies the magnitude of the target qubit's polarization ($|\alpha|$) while preserving its original sign, without requiring prior knowledge of that sign. This is the core "cooling" action.

- Why used: Unitary operations are fundamental in quantum mechanics, representing reversible transformations that preserve the total entropy of a closed system. By carefully designing this unitary, the authors can manipulate the entropy distribution within the system, achieving the desired polarization enhancement. The use of a sequence of local unitaries makes the protocol more experimentally feasible compared to a single, complex global unitary.

- $U_{\text{QR}}^\dagger(n)$:

- Mathematical Definition: This is the Hermitian conjugate (adjoint) of the unitary operator $U_{\text{QR}}(n)$.

- Physical/Logical Role: In quantum mechanics, when a unitary operator $U$ acts on a density matrix $\rho$, the transformation is given by $U \rho U^\dagger$. The adjoint ensures that the transformed state remains a valid density matrix (Hermitian, positive-semidefinite, and with unit trace).

- Why used: This is a mathematical necessity for correctly applying unitary transformations to density matrices.

- $\text{Tr}_m[\dots]$:

- Mathematical Definition: This denotes the partial trace operation over the $m$ reset qubits. If the total system is composed of two subsystems, $A$ and $B$, and its state is $\rho_{AB}$, then $\text{Tr}_B[\rho_{AB}]$ yields the reduced density matrix of subsystem $A$.

- Physical/Logical Role: This operation represents the effective "removal" or "discarding" of the $m$ reset qubits from the system after they have absorbed entropy from the target and auxiliary qubits. It's the first part of the dissipative step, preparing the system for thermalization.

- Why used: Partial trace is the correct mathematical tool to obtain the reduced state of a subsystem when the total system is in a mixed or entangled state. Here, it allows us to focus on the state of the target and auxiliary qubits, effectively isolating them from the "hot" reset qubits.

- $\otimes$:

- Mathematical Definition: This is the tensor product operator, used to combine quantum states of independent subsystems.

- Physical/Logical Role: This operator combines the reduced state of the remaining $n-m$ qubits (after tracing out the old reset qubits) with the $m$ fresh, thermalized reset qubits ($\rho_a^{\otimes m}$). This represents the "thermalization" or "reset" step, where the accumulated entropy is expelled into a heat bath by replacing the "hot" reset qubits with "cold" ones.

- Why used: The tensor product is used because the newly introduced reset qubits are assumed to be prepared independently in the thermal state $\rho_a$, and thus their state is uncorrelated with the remaining $n-m$ qubits.

-

$\rho_a^{\otimes m}$:

- Mathematical Definition: This represents the state of $m$ identical qubits, each prepared in the thermal bath state $\rho_a$. The $\otimes m$ indicates a tensor product of $m$ copies of $\rho_a$.

- Physical/Logical Role: These are the "fresh" or "cold" qubits that are introduced into the system to replace the "hot" reset qubits. They play the role of the heat bath: swapping them in discards the entropy that the unitary step concentrated in the old reset qubits and returns the reset register to a low-entropy state. This replenishment is crucial for the cyclic operation of the refrigerator, allowing it to keep extracting entropy round after round.

- Why used: This term models the dissipative part of the cooling cycle, where the system interacts with an external environment (the heat bath) to expel entropy. The assumption of identical thermal bath qubits simplifies the modeling of this reset process.

Step-by-Step Flow

Let's trace the exact lifecycle of a single abstract data point, represented by its initial polarization $\alpha$, as it passes through one round of this quantum refrigeration mechanism.

- Initial State Preparation: An abstract data point, characterized by its feature vector $x$, is encoded into the polarization $\alpha$ of a quantum state. This state, along with $n-1$ other qubits (auxiliary and reset qubits), forms the initial $n$-qubit register, $\rho_T$. For simplicity, imagine the target qubit is the first one, and the remaining $n-1$ qubits are prepared in some initial state, often thermal.

- Entropy Compression (Unitary Step): The entire $n$-qubit register, $\rho_T$, is subjected to a carefully designed global unitary operation, $U_{\text{QR}}(n)$. Think of this as a quantum sorting machine. This unitary coherently shuffles the populations of the computational basis states across all qubits. Its primary job is to extract entropy from the target qubit and the auxiliary qubits, concentrating this "heat" into a specific subset of $m$ "reset" qubits. The magic here is that this unitary is "bidirectional": it doesn't care if the target qubit's initial polarization $\alpha$ is positive or negative. It simply amplifies its magnitude, turning $\alpha$ into $\alpha'$ such that $|\alpha'| > |\alpha|$, while preserving the original sign of $\alpha$. This makes the target qubit "colder" or more polarized.

- Entropy Expulsion (Partial Trace): After the unitary operation, the $m$ reset qubits, which have now become "hotter" due to the concentrated entropy, are effectively isolated and removed from the system. Mathematically, we perform a partial trace, $\text{Tr}_m[\dots]$, over these $m$ qubits. This leaves us with a reduced quantum state for the remaining $n-m$ qubits, which now includes the enhanced target qubit and the auxiliary qubits. This step prepares the system for the replenishment of "cold" qubits.

- Thermalization/Reset (Qubit Replacement): To complete the round and ensure the refrigerator can operate continuously, $m$ brand-new, "cold" qubits, each prepared in a thermal bath state $\rho_a$, are introduced. These fresh qubits are then combined with the remaining $n-m$ qubits via a tensor product ($\otimes \rho_a^{\otimes m}$). This action effectively "resets" the refrigerator's capacity by expelling the accumulated entropy into an external heat bath (represented by the fresh qubits). The system is now back to an $n$-qubit register, but with a target qubit that has an enhanced polarization.

- Recycling for Next Round: The newly formed $n$-qubit state becomes the input $\rho_T$ for the next round of the BQR. The enhanced target qubit can be extracted for measurement, or the process can be repeated for multiple rounds ($N_{\text{rounds}}$) to achieve even greater polarization enhancement. The remaining $n-1$ qubits (auxiliary and fresh reset qubits) are recycled to prepare subsequent target qubits, making the protocol resource-efficient.

This iterative process ensures that the target qubit's polarization is progressively boosted, making the classification signal stronger and more reliable.
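The round described above can be sketched numerically. What follows is a minimal toy model, not the paper's PBEC-BQR circuit. It assumes every state is diagonal in the computational basis, so a density matrix reduces to a population vector and a basis-swapping unitary reduces to a permutation. It uses only $n=3$ qubits with $m=2$ reset qubits, and it assumes the bath state $\rho_a$ carries the same polarization as the target. All helper names are hypothetical.

```python
def qubit_populations(polarization):
    """Populations (p0, p1) of a diagonal qubit state with Z-polarization p0 - p1."""
    return [(1 + polarization) / 2, (1 - polarization) / 2]

def tensor(*factors):
    """Joint populations of independent subsystems (first factor = leftmost qubit)."""
    joint = [1.0]
    for factor in factors:
        joint = [a * b for a in joint for b in factor]
    return joint

def apply_swap(populations, i, j):
    """A unitary that swaps basis states i and j simply permutes the populations."""
    out = list(populations)
    out[i], out[j] = out[j], out[i]
    return out

def trace_out_last(populations, m):
    """Partial trace over the last m qubits = summing over their outcomes."""
    block = 2 ** m
    return [sum(populations[k:k + block]) for k in range(0, len(populations), block)]

def one_round(system, bath_polarization, m, swap_pair):
    """Toy version of rho -> Tr_m[U rho U^dagger] (x) rho_a^{(x) m} for diagonal states."""
    compressed = apply_swap(system, *swap_pair)           # entropy compression
    kept = trace_out_last(compressed, m)                  # discard hot reset qubits
    fresh = tensor(*[qubit_populations(bath_polarization)] * m)  # cold replacements
    return tensor(kept, fresh)

def target_polarization(populations):
    """Z-polarization of the first (leftmost) qubit."""
    half = len(populations) // 2
    return sum(populations[:half]) - sum(populations[half:])

# Assumed example values: alpha = +0.2 and -0.2, swap |011> <-> |100>.
for alpha in (0.2, -0.2):
    start = tensor(*[qubit_populations(alpha)] * 3)
    after = one_round(start, alpha, m=2, swap_pair=(0b011, 0b100))
    print(alpha, "->", round(target_polarization(after), 6))
```

Under these assumptions a single round maps $\alpha$ to $(3\alpha - \alpha^3)/2$, which is odd in $\alpha$: the magnitude grows from 0.2 to 0.296 while the sign is preserved in both directions.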

Optimization Dynamics

The BQR mechanism optimizes the performance of quantum machine learning by directly addressing the challenge of finite sampling errors, which arise from the probabilistic nature of quantum measurements. It does this not by adjusting model parameters in the traditional sense, but by preprocessing the quantum states to enhance the signal-to-noise ratio of classification scores.

- Loss Landscape and Gradient Reliability: In QML, the classification score $q(x, \theta) = \alpha(x, \theta)$ is estimated from a finite number of measurements. The training process typically involves minimizing a loss function (e.g., hinge loss) by iteratively updating model parameters $\theta$ based on the gradient $\frac{dl}{d\theta}$ (Eq. (8)). If the magnitude of the polarization $|\alpha(x, \theta)|$ is small, the statistical fluctuations from finite measurements can easily obscure the true sign of $\alpha$, leading to incorrect classification labels or, critically, misdirected gradient updates. This makes the "loss landscape" effectively very flat or noisy, hindering efficient optimization.

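- Polarization Enhancement as "Signal Amplification": The BQR's core "optimization" is to increase the magnitude of this polarization, from $|\alpha|$ to $|\alpha'| > |\alpha|$, while preserving its sign, so that fewer measurement shots are needed to determine the sign reliably.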
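To make the link between polarization magnitude and sign reliability concrete, the sketch below computes the exact probability that a majority vote over a finite number of Z-measurements reports the wrong sign. This is an illustrative calculation under the assumption of independent, identically distributed shots; the function name is hypothetical, and the quantity is not necessarily the error-probability bound used in the paper.

```python
from math import comb

def sign_error_probability(alpha, shots):
    """Probability that a majority vote over `shots` Z-measurements gives the
    wrong sign of the polarization alpha (ties are broken by a fair coin)."""
    p_correct = (1 + abs(alpha)) / 2  # chance a single shot agrees with sign(alpha)
    error = 0.0
    for correct in range(shots + 1):
        prob = (comb(shots, correct)
                * p_correct ** correct
                * (1 - p_correct) ** (shots - correct))
        if 2 * correct < shots:       # minority of shots agree: wrong sign
            error += prob
        elif 2 * correct == shots:    # tie: wrong half of the time
            error += 0.5 * prob
    return error

# Assumed example polarizations and shot counts.
for alpha in (0.05, 0.2, 0.4):
    print(alpha, [round(sign_error_probability(alpha, k), 4) for k in (10, 100)])
```

The error falls as either the shot count or $|\alpha|$ increases, which is why amplifying $|\alpha|$ can substitute for additional measurements.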

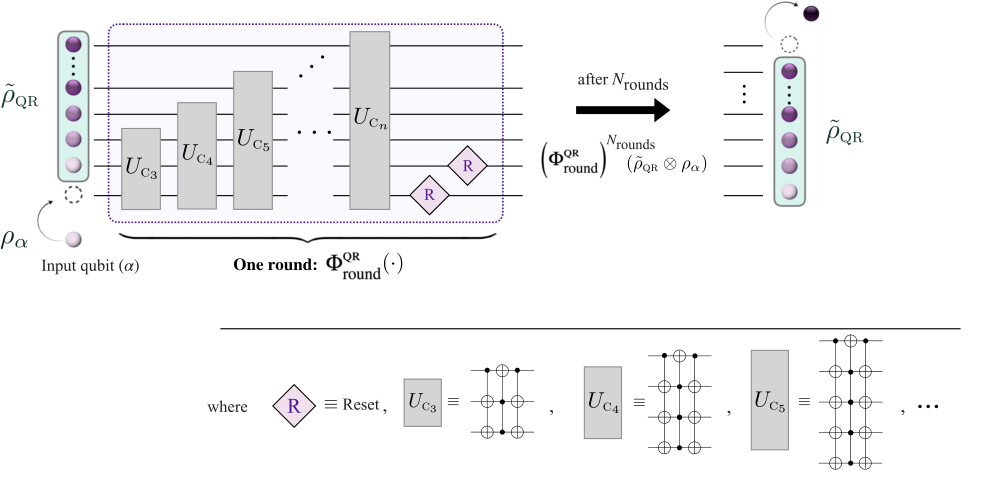

FIG. 4. Bidirectional quantum refrigerator. Circuit diagram of the progressive boundary entropy compression-bidirectional quantum refrigerator protocol acting on an $n$-qubit register with $m$ reset qubits ($m = 2$ shown). The unitary stage consists of a stairlike sequence of unitaries $U_{C_3}, \dots, U_{C_n}$, where each $U_{C_j}$ performs a boundary entropy-compression step by swapping the states $|0\rangle|1\rangle^{\otimes (j-1)}$ and $|1\rangle|0\rangle^{\otimes (j-1)}$ on the last $j$ qubits. After the unitary stage, the $m$ reset qubits are refreshed, completing one round of the protocol. The protocol runs for $N_{\text{rounds}}$ rounds to prepare the target qubit; once prepared, the target qubit is extracted ready for the classification task, and the process restarts by recycling the remaining $n-1$ qubits to prepare subsequent target qubits.

Results, Limitations & Conclusion

Experimental Design & Baselines

To test their analytical claims, the authors ran a series of simulated experiments using Qiskit and scikit-learn, focusing on the practical classification performance of their Bidirectional Quantum Refrigerator (BQR) protocols. The core objective was to demonstrate that enhancing the polarization of a measurement qubit through BQR translates directly into improved classification accuracy by mitigating finite sampling errors.

The experimental architecture centered on a conservative choice: the three-local BQR protocol (k=3), operating with $n=5$ system qubits, $m=2$ reset qubits, and $N_{rounds}=2$ cooling rounds. This specific configuration was chosen because if even this more practical, k-local version could outperform baselines, the full BQR protocol (which offers stronger cooling) would be expected to perform even better.

The baseline against which the BQR was compared is conventional sampling, which uses no BQR enhancement. To ensure a fair comparison and isolate the impact of finite sampling error (which BQR aims to reduce), the baseline method was allocated proportionally more measurement shots. Specifically, if the BQR-enhanced classifier used $k_{BQR}$ measurement shots (e.g., 10 or 100 shots to reflect realistic NISQ constraints), the conventional baseline was given $k_c = k_{BQR} \times m \times (N_{rounds} - 1) + n$ shots. For $k_{BQR}=10$, this meant the baseline received $10 \times 2 \times (2-1) + 5 = 25$ shots. For $k_{BQR}=100$, the baseline received $100 \times 2 \times (2-1) + 5 = 205$ shots. This scaling ensured that both approaches consumed comparable qubit resources, so that any observed performance difference is attributable to the BQR's efficiency rather than simply to more raw measurements.

To further isolate the effect of sampling error from the inherent expressivity or trainability of QML models, the problem setup was simplified. The experiments assumed direct access to the final quantum state in the form of a single-qubit reduced density matrix, where its Z-polarization directly encoded the classification signal. This allowed for a clear assessment of how BQR improves the reliability of predictions from fewer measurements.

The experiments utilized a diverse range of datasets for binary classification tasks, including synthetic datasets (Uniform and Gaussian distributions) and several real-world datasets: Iris, Wine, Handwritten digits (specifically 2 vs 5), Sonar, and Pima Indians Diabetes. For each dataset, 50 data points were randomly sampled from each class to create balanced tasks, and this entire sampling and evaluation procedure was repeated 100 times to generate a robust statistical ensemble.

The authors also tested the protocols under realistic noisy intermediate-scale quantum (NISQ) conditions. Numerical simulations incorporated generalized amplitude damping (GAD) and depolarizing channels, which model energy relaxation and stochastic gate errors, respectively. Two noise regimes were considered: a "typical NISQ regime" with moderate noise parameters and a "worst-case regime" with deliberately exaggerated noise strengths to stress-test the protocol's robustness. Together, these experiments probe the BQR's efficacy both in the ideal setting and under practically relevant noise.
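The effect of these two channels on a single qubit's Z-polarization can be written down in closed form. The sketch below uses the standard textbook forms of the channels; the function names and the numerical noise parameters are illustrative assumptions and are not the values used in the paper.

```python
def depolarize(z, p):
    """Depolarizing channel rho -> (1 - p) rho + p I/2: shrinks the
    Z-polarization symmetrically toward zero."""
    return (1 - p) * z

def generalized_amplitude_damping(z, gamma, p_ground):
    """GAD with damping strength gamma relaxes the Z-polarization toward the
    equilibrium value 2 * p_ground - 1 (p_ground = equilibrium |0> population)."""
    return (1 - gamma) * z + gamma * (2 * p_ground - 1)

# Hypothetical noise strengths, chosen only for illustration.
GAMMA, P_GROUND, P_DEPOL = 0.1, 1.0, 0.05

for z in (0.3, -0.3):
    noisy = depolarize(generalized_amplitude_damping(z, GAMMA, P_GROUND), P_DEPOL)
    print(z, "->", round(noisy, 4))
```

Depolarizing noise treats both signs alike, whereas amplitude damping pulls the polarization toward one fixed direction, so positive and negative inputs are affected differently.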

What the Evidence Proves

The evidence presented in the paper indicates that the Bidirectional Quantum Refrigerator (BQR) protocols, particularly the three-local BQR, enhance sampling efficiency and improve classification accuracy in quantum machine learning, even under simulated realistic noise. The core mechanism of increasing the magnitude of a target qubit's polarization while preserving its sign was shown to work in numerical simulation, reducing the number of measurements required for accurate classification.

The most compelling evidence comes from Table I, which summarizes the classification accuracy across all tested datasets. In every case reported, the BQR-enhanced classifier outperformed the conventional sampling baseline. For example, on the Uniform dataset with $k_{BQR}=10$ shots, the BQR achieved an accuracy of $95.8\% \pm 1.8\%$, while the baseline (with 25 shots) reached only $93.1\% \pm 2.3\%$. When $k_{BQR}$ was increased to 100 shots (baseline at 205 shots), the BQR achieved $99.3\% \pm 0.7\%$ accuracy, compared to the baseline's $97.7\% \pm 1.3\%$. The same pattern was observed across all synthetic and real-world datasets, including Iris, Wine, Handwritten digits, Sonar, and Diabetes. The improvements were checked with Welch's t-tests, which yielded p-values below 0.05 in all cases, indicating that the observed gains are unlikely to be due to chance.
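For readers unfamiliar with Welch's test, the sketch below computes its statistic and the Welch-Satterthwaite degrees of freedom from summary statistics. It assumes that the quoted $\pm$ values are sample standard deviations over the 100 repetitions; this interpretation is an assumption, so the output is illustrative rather than a reproduction of the authors' analysis.

```python
from math import sqrt

def welch_t(mean1, std1, n1, mean2, std2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom for two
    samples with unequal variances, given only their summary statistics."""
    a = std1 ** 2 / n1  # squared standard error of the first mean
    b = std2 ** 2 / n2  # squared standard error of the second mean
    t = (mean1 - mean2) / sqrt(a + b)
    dof = (a + b) ** 2 / (a ** 2 / (n1 - 1) + b ** 2 / (n2 - 1))
    return t, dof

# Uniform dataset, k_BQR = 10: BQR vs. baseline accuracies quoted in the text.
# Treating the +/- values as standard deviations over 100 runs is an assumption.
t, dof = welch_t(95.8, 1.8, 100, 93.1, 2.3, 100)
print(f"t = {t:.2f}, dof = {dof:.1f}")
```

Converting the statistic into a p-value requires the Student-t distribution; with raw per-run accuracies available, `scipy.stats.ttest_ind(a, b, equal_var=False)` performs the full test.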

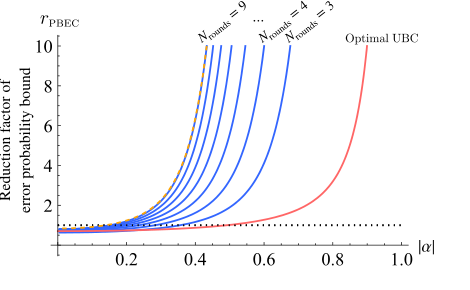

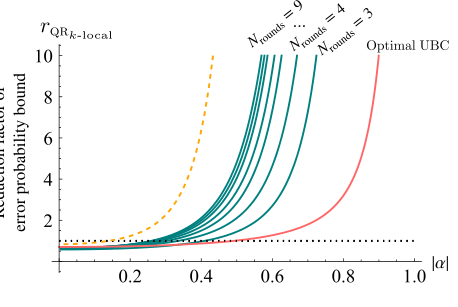

Beyond raw accuracy, the paper provides graphical evidence of the underlying mechanism's success. Figures 5 and 6 illustrate the enhanced polarization ($\alpha_{PBEC}'$) and the reduction factor of the error-probability bound ($r_{PBEC}$) for the Progressive Boundary Entropy Compression BQR (PBEC-BQR) in an ideal, noise-free setting. These figures show that the BQR markedly increases the polarization magnitude and substantially reduces the error bound, especially in the intermediate and high-polarization regimes. This directly validates the mathematical claim that entropy reduction leads to improved statistical estimates.

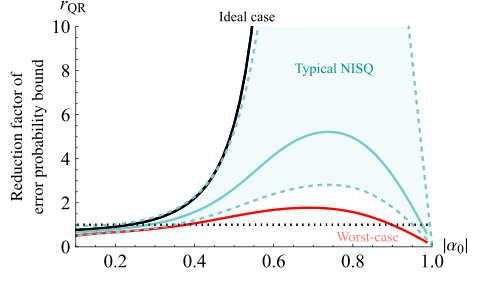

The paper also examines the robustness of the BQR against noise, a critical concern for NISQ devices. Figure 10 shows that even under typical NISQ-level noise, the BQR still achieves significant polarization enhancement, converging to a steady state that remains close to the ideal noise-free performance. Figure 11 quantifies this by showing the reduction factor of the error-probability bound ($r_{QR}$) under typical NISQ noise. Despite some asymmetry introduced by amplitude damping (which drives polarization towards one direction), the overall reduction factor remains substantial, indicating that the protocol mitigates sampling errors even with imperfect operations. Finally, Figure 12 shows that even under a "worst-case" noise regime, with deliberately exaggerated noise strengths, the BQR continues to yield a reduction in the effective error probability over a wide range of initial polarizations. These simulations support the claim that the BQR's core mechanism survives realistic noise, offering a physically grounded and noise-resilient pathway to enhanced learning performance in QML.

FIG. 12. Reduction factor of the error-probability bound for the five-qubit progressive boundary entropy compression-bidirectional quantum refrigerator with $N_{\text{rounds}} = 5$, evaluated under both the typical noisy intermediate-scale quantum noise regime (green curve) and the worst-case regime (solid red line). Even under the deliberately exaggerated noise strengths of the worst-case scenario, the protocol continues to yield an enhancement over nearly the same range of initial polarizations as in the ideal case, demonstrating its robustness against realistic and strongly dissipative noise conditions.

Limitations & Future Directions

While the Bidirectional Quantum Refrigerator (BQR) protocols present a significant advancement for QML, the authors candidly acknowledge several limitations and propose compelling avenues for future research.

One notable limitation is the performance in the low-polarization regime. Neither the Unitary Bidirectional Cooling (UBC) protocol nor the BQR protocols provide a meaningful improvement when the initial polarization $\alpha$ is very close to zero. While the region of advantage can be expanded by optimizing protocol parameters, the extreme low-polarization limit remains challenging. For data or model states in this regime, the benefits of BQR may be minimal, and alternative or complementary strategies are needed.

Another open question revolves around the optimality of the PBEC-BQR protocol. The paper does not definitively establish whether this specific cooling protocol is truly optimal for reducing finite sampling errors. If it is not, there's a clear direction for future work to refine the protocol further, potentially leading to even greater performance gains.

From a resource perspective, while BQR schemes are more practical than a single global unitary UBC, they still require additional qubits to sustain the cyclic rounds of cooling. This adds to the qubit overhead compared to a single-shot UBC. Future work could explore methods to minimize this overhead or investigate trade-offs between qubit count, circuit depth, and cooling performance. The paper also notes that while a single global unitary on all qubits would achieve the best polarization enhancement, such an operation is not practical to implement. This highlights the ongoing tension between theoretical optimality and experimental feasibility in quantum computing.

A significant area for future development lies in extending the applicability to quantum kernel estimation. The current method primarily aids in sign estimation for binary outcomes, which is not directly sufficient for estimating kernel matrix elements. Adapting these cooling techniques to reduce finite sampling errors in quantum kernels presents unique challenges, as the quantities to be estimated are more complex. Addressing this would broaden the impact of quantum thermodynamics insights across a wider spectrum of QML applications.

Looking forward, several exciting discussion topics emerge:

- Harnessing Coherence and Nonclassical Correlations: The paper mentions investigating how coherence and nonclassical correlations within the system and bath qubits could be leveraged to improve cooling efficiency. This is a fascinating direction, as current protocols primarily operate within the diagonal subspace, making them robust to dephasing noise but potentially leaving untapped resources for further enhancement.

- Barren Plateau Mitigation: A detailed quantitative analysis of how BQR mitigates the barren plateau effect is warranted. The authors suggest that their technique can be employed as an independent mechanism in conjunction with existing strategies for barren plateau mitigation. Understanding this interplay could lead to more robust and scalable QML algorithms.

- Data Reuploading and BQR Circuits: The connection between the BQR method and data reuploading techniques opens up intriguing possibilities. Constructing a data reuploading version of the BQR circuit could allow for a comprehensive trade-off analysis between circuit depth and qubit overhead, potentially leading to more resource-efficient implementations.

- Beyond Binary Classification: While the current work focuses on binary classification, the underlying thermodynamic framework for entropy reduction could potentially be adapted to other QML tasks, such as regression or more complex multi-class problems. This would require careful consideration of how "polarization" and "sign" generalize in these contexts.

- Experimental Realization and Hardware Optimization: The numerical simulations under NISQ noise are promising, but actual experimental realization on various quantum hardware platforms (superconducting, trapped-ion, neutral-atom) would be the ultimate validation. This would also provide valuable feedback for optimizing BQR circuits for specific hardware architectures and noise characteristics.

- Theoretical Foundations of Cooling for QML: The work establishes a novel connection between quantum thermodynamics and QML. Further theoretical exploration into the fundamental limits of cooling for learning tasks, and potential isomorphisms with other fields, could yield deeper insights and inspire entirely new algorithmic paradigms.

These diverse perspectives highlight that the BQR protocols are not just a practical solution for current QML challenges but also a fertile ground for interdisciplinary research, pushing the boundaries of quantum information science and machine learning.

FIG. 6. Reduction factor of the error-probability bound for the progressive boundary entropy compression-bidirectional quantum refrigerator with $n = 5$ qubits, shown in blue for different numbers of rounds as a function of the initial $|\alpha|$. The pink line shows the performance of unitary bidirectional cooling, and the yellow dashed line corresponds to an adaptive bidirectional quantum refrigerator using optimal per-round compressions (shown here for $N_{\text{rounds}} = 9$). The progressive boundary entropy compression-bidirectional quantum refrigerator, despite using identical rounds, closely matches the adaptive scheme for $n = 5$, $m = 2$, and $N_{\text{rounds}} = 9$, whereas deviations from this number of rounds lead to a visible performance gap (not shown).

FIG. 8. Reduction factor of the error-probability bound for the three-local bidirectional quantum refrigerator with $n = 5$, shown in green for different numbers of rounds as a function of the initial $|\alpha|$. The pink line shows the improvement achieved through single-shot unitary bidirectional cooling on an $n = 5$ register, while the yellow dashed line indicates the upper bound obtained from simulations of the adaptive-round bidirectional quantum refrigerator with $N_{\text{rounds}} = 9$. Although the performance of the three-local bidirectional quantum refrigerator shows a noticeable gap relative to this upper bound, reflecting its reduced optimality, it remains significantly more practical to implement while still achieving substantial reductions in the error-probability bound.
